Introduction to Couchbase Server¶

Couchbase Server is a NoSQL document database for interactive web applications. It has a flexible data model, is easily scalable, provides consistent high performance and is ‘always-on,’ meaning it can serve application data 24 hours a day, 7 days a week. Couchbase Server provides the following benefits:

-

Flexible Data Model

With Couchbase Server, you use JSON documents to represent application objects and the relationships between objects. This document model is flexible enough that you can change application objects without having to migrate the database schema or plan for significant application downtime. Even the same type of object in your application can have different data structures. For instance, you can initially represent a user name as a single document field. You can later structure a user document so that the first name and last name are separate fields in the JSON document without any downtime, and without having to update all user documents in the system.

The other advantage of a flexible, document-based data model is that it is well suited to representing real-world items the way you want to represent them. JSON documents support nested structures, as well as fields representing relationships between items, which enable you to realistically represent objects in your application.
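As a minimal sketch of this flexibility, the following Python snippet handles two user documents with different shapes side by side. The field names (`name`, `first_name`, `last_name`, `address`) and the `display_name` helper are hypothetical and chosen only for illustration; they are not part of Couchbase Server.

```python
import json

# Two user documents with different shapes, as they might coexist in one bucket.
# All field names here are illustrative assumptions.
old_style = json.loads('{"type": "user", "name": "Ada Lovelace"}')
new_style = json.loads(
    '{"type": "user", "first_name": "Grace", "last_name": "Hopper",'
    ' "address": {"city": "Arlington", "country": "US"}}'
)


def display_name(doc):
    """Return a printable name for either document shape."""
    if "first_name" in doc and "last_name" in doc:
        return f'{doc["first_name"]} {doc["last_name"]}'
    return doc["name"]


for doc in (old_style, new_style):
    print(display_name(doc))
```

The application decides how to read each shape, so older documents do not need to be rewritten when the newer structure is introduced.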

-

Easy Scalability

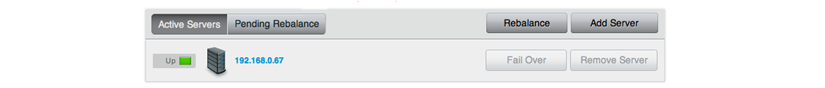

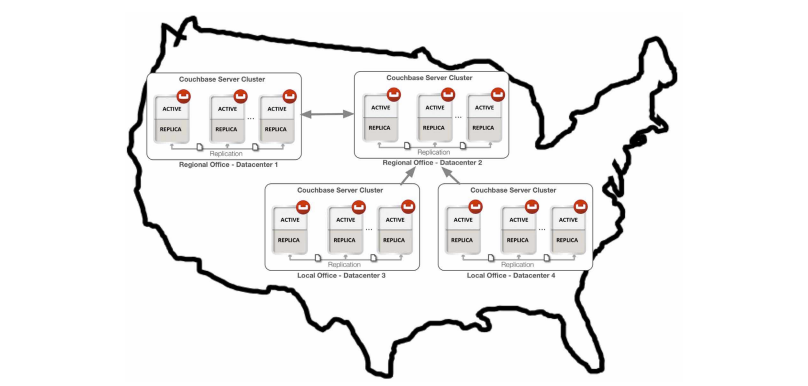

It is easy to scale your application with Couchbase Server, both within a cluster of servers and between clusters at different data centers. You can add additional instances of Couchbase Server to address additional users and growth in application data without any interruptions or changes in your application code. With one click of a button, you can rapidly grow your cluster of Couchbase Servers to handle additional workload and keep data evenly distributed.

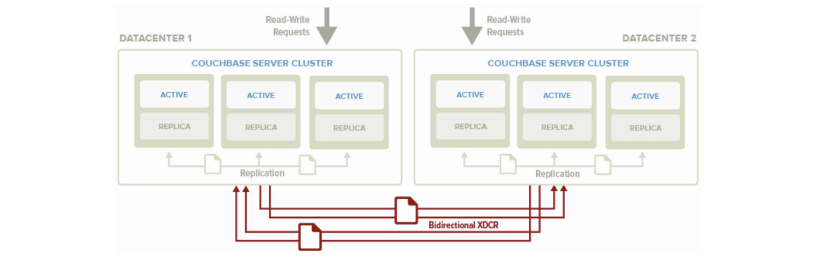

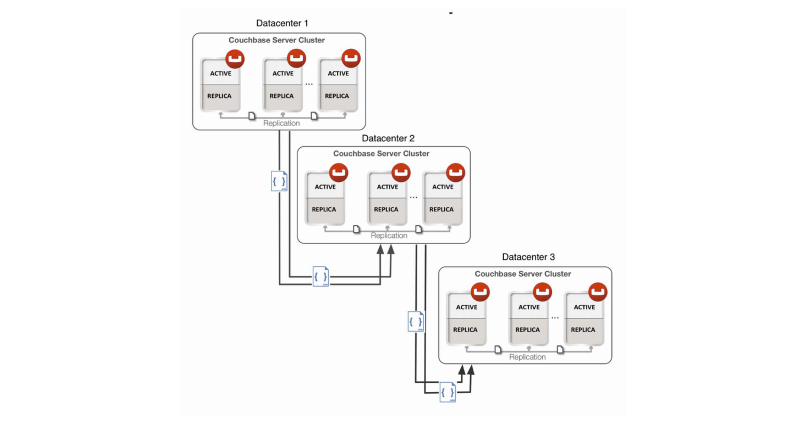

Couchbase Server provides automatic sharding of data and rebalancing at runtime; this lets you resize your server cluster on demand. Cross-data center replication, provided in Couchbase Server 2.0, enables you to move data closer to your users at other data centers.

-

Consistent High Performance

Couchbase Server is designed for massively concurrent data use and consistent high throughput. It provides consistent sub-millisecond response times which help ensure an enjoyable experience for users of your application. By providing consistent, high data throughput, Couchbase Server enables you to support more users with fewer servers. The server also automatically spreads workload across all servers to maintain consistent performance and reduce bottlenecks at any given server in a cluster.

-

“Always Online”

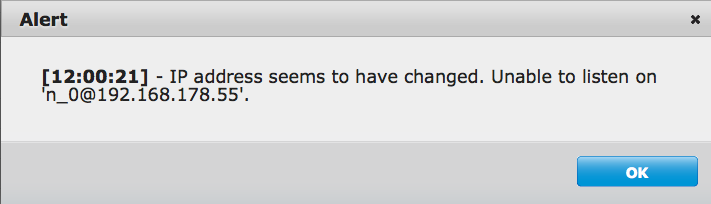

Features such as cross-data center replication and auto-failover help ensure availability of data during server or datacenter failure.

All of these features of Couchbase Server enable development of web applications where low latency and high throughput are required by end users. Web applications can quickly access the right information within a Couchbase cluster and developers can rapidly scale up their web applications by adding servers.

Couchbase Server and NoSQL¶

NoSQL databases are characterized by their ability to store data without first requiring you to define a database schema. In Couchbase Server, you can store data as key-value pairs or JSON documents. Data does not need to conform to a rigid, pre-defined schema from the perspective of the database management system. Due to this schema-less nature, Couchbase Server supports a scale-out approach to growth, increasing data and I/O capacity by adding more servers to a cluster, without any change to application software. In contrast, relational database management systems scale up by adding more capacity, including CPU, memory and disk, to accommodate growth.

Relational databases store information in relations which must be defined, or modified, before data can be stored. A relation is simply a table of rows, where each row in a given relation has a fixed set of columns. These columns are consistent across each row in a relation. Tables can be further connected through cross-table references. One table could hold rows of all individual citizens residing in a town. Another table could have rows consisting of parent, child and relationship fields. The first two fields could be references to rows in the citizens table, while the third field describes the parental relationship between the persons in the first two fields, such as father or mother.

Architecture and Concepts¶

To understand the structure and layout of Couchbase Server, you first need to understand the components and systems that make up an individual Couchbase Server instance, and the components and systems that work together to make up the Couchbase Cluster as a whole.

The following section provides key information and concepts that you need to understand the fast and elastic nature of the Couchbase Server database, and how some of the components work together to support a highly available and high performance database.

Nodes and Clusters¶

Couchbase Server can be used either in a standalone configuration, or in a cluster configuration where multiple Couchbase Servers are connected together to provide a single, distributed, data store.

In this description:

-

Couchbase Server or Node

A single instance of the Couchbase Server software running on a machine, whether a physical machine, virtual machine, EC2 instance or other environment.

All instances of Couchbase Server are identical, provide the same functionality, interfaces and systems, and consist of the same components.

-

Cluster

A cluster is a collection of one or more instances of Couchbase Server that are configured as a logical cluster. All nodes within the cluster are identical and provide the same functionality. Each node is capable of managing the cluster and each node can provide aggregate statistics and operational information about the cluster. User data is stored across the entire cluster through the vBucket system.

Clusters operate in a completely horizontal fashion. To increase the size of a cluster, you add another node. There are no parent/child relationships or hierarchical structures involved. This means that Couchbase Server scales linearly, both in terms of increasing the storage capacity and the performance and scalability.

Cluster Manager¶

Every node within a Couchbase Cluster includes the Cluster Manager component. The Cluster Manager is responsible for the following within a cluster:

Cluster management

Node administration

Node monitoring

Statistics gathering and aggregation

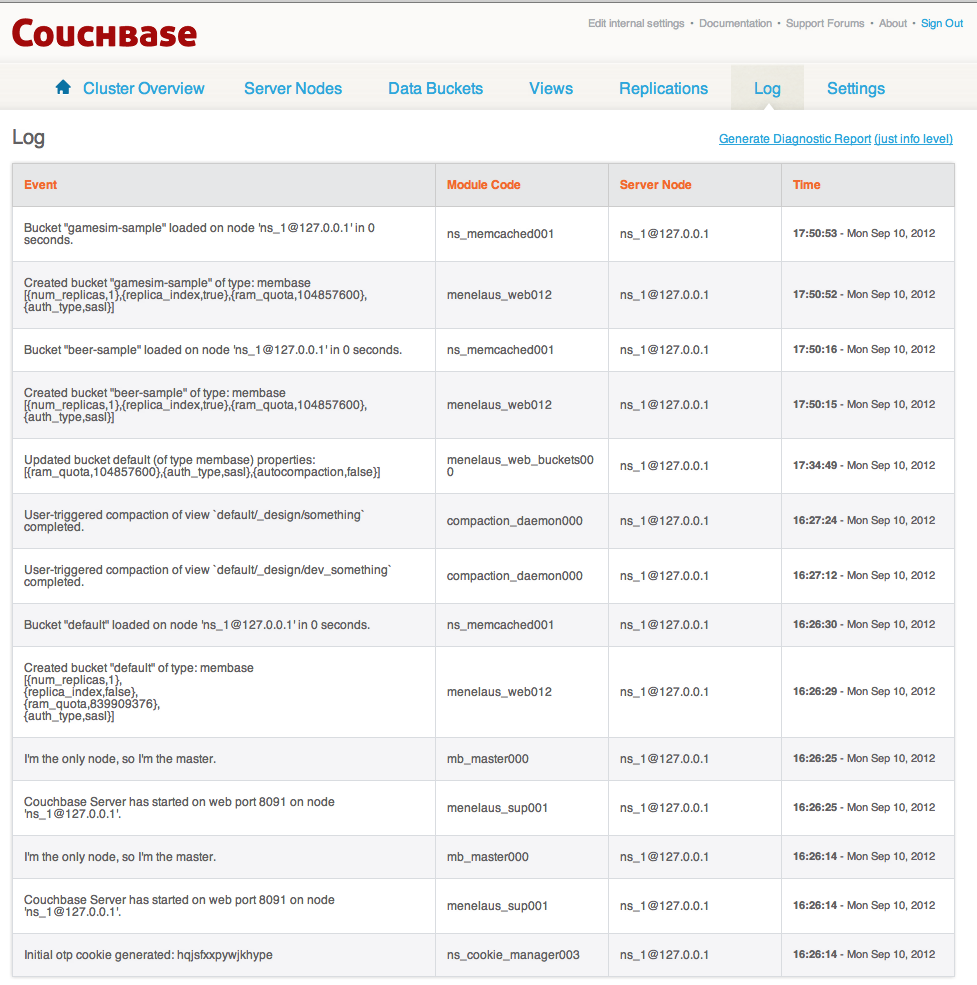

Run-time logging

Multi-tenancy

Security for administrative and client access

Client proxy service to redirect requests

Access to the Cluster Manager is provided through the administration interface (see Administration Tools ) on a dedicated network port, and through dedicated network ports for client access. Additional ports are configured for inter-node communication.

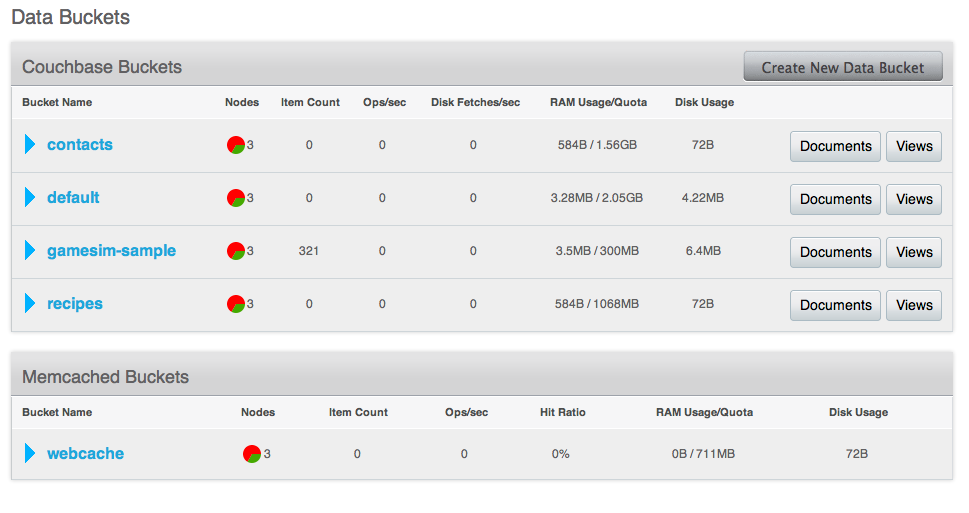

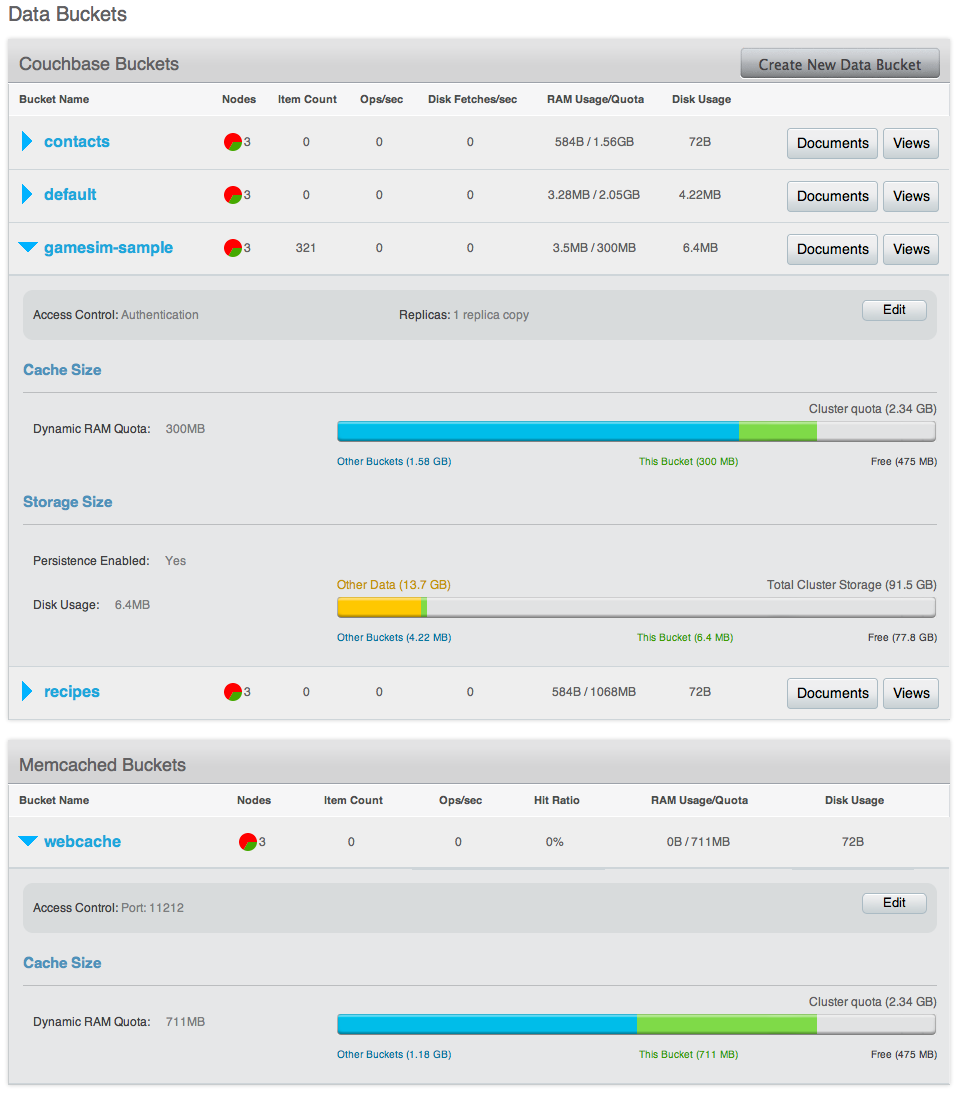

Data Storage¶

Couchbase Server provides data management services using buckets; these are isolated virtual containers for data. A bucket is a logical grouping of physical resources within a cluster of Couchbase Servers. Buckets can be used by multiple client applications across a cluster. They provide a secure mechanism for organizing, managing, and analyzing data storage resources.

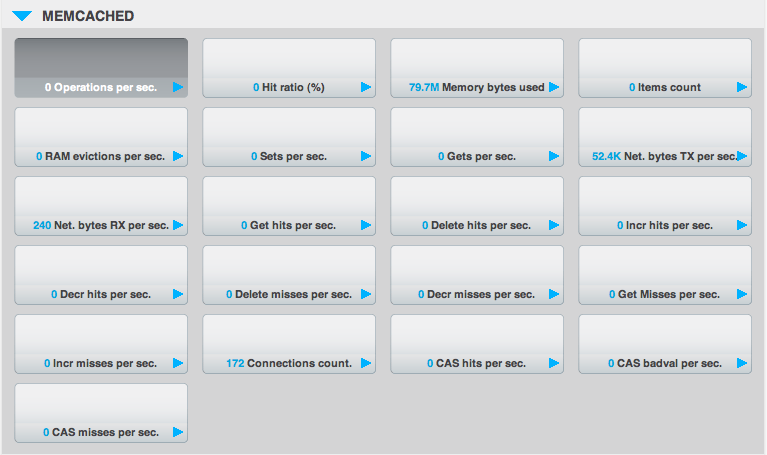

There are two types of data bucket in Couchbase Server: 1) memcached buckets, and 2) couchbase buckets. The two different types of buckets enable you to store data in-memory only, or to store data in-memory as well as on disk for added reliability. When you set up Couchbase Server you can choose what type of bucket you need in your implementation:

| Bucket Type | Description |
|---|---|
| Couchbase | Provides highly-available and dynamically reconfigurable distributed data storage, providing persistence and replication services. Couchbase buckets are 100% protocol compatible with, and built in the spirit of, the memcached open source distributed key-value cache. |
| Memcached | Provides a directly-addressed, distributed (scale-out), in-memory, key-value cache. Memcached buckets are designed to be used alongside relational database technology – caching frequently-used data, thereby reducing the number of queries a database server must perform for web servers delivering a web application. |

The different bucket types support different capabilities. Couchbase-type buckets provide a highly-available and dynamically reconfigurable distributed data store. Couchbase-type buckets survive node failures and allow cluster reconfiguration while continuing to service requests. Couchbase-type buckets provide the following core capabilities.

| Capability | Description |
|---|---|
| Caching | Couchbase buckets operate through RAM. Data is kept in RAM and persisted down to disk. Data will be cached in RAM until the configured RAM is exhausted, when data is ejected from RAM. If requested data is not currently in the RAM cache, it will be loaded automatically from disk. |
| Persistence | Data objects can be persisted asynchronously to hard-disk resources from memory to provide protection from server restarts or minor failures. Persistence properties are set at the bucket level. |
| Replication | A configurable number of replica servers can receive copies of all data objects in the Couchbase-type bucket. If the host machine fails, a replica server can be promoted to be the host server, providing high availability cluster operations via failover. Replication is configured at the bucket level. |
| Rebalancing | Rebalancing enables load distribution across resources and dynamic addition or removal of buckets and servers in the cluster. |

| Capability | memcached Buckets | Couchbase Buckets |
|---|---|---|
| Item Size Limit | 1 MByte | 20 MByte |
| Persistence | No | Yes |
| Replication | No | Yes |
| Rebalance | No | Yes |
| Statistics | Limited set for in-memory stats | Full suite |
| Client Support | Memcached, should use Ketama consistent hashing | Full Smart Client Support |

There are three bucket interface types that can be configured:

-

The default Bucket

The default bucket is a Couchbase bucket that always resides on port 11211 and is a non-SASL authenticating bucket. When Couchbase Server is first installed, this bucket is automatically set up during installation. This bucket may be removed after installation and may also be re-added later, but when re-adding a bucket named “default”, the bucket must be placed on port 11211 and must be a non-SASL authenticating bucket. A bucket not named default may not reside on port 11211 if it is a non-SASL bucket. The default bucket may be reached with a vBucket aware smart client, an ASCII client or a binary client that doesn’t use SASL authentication.

-

Non-SASL Buckets

Non-SASL buckets may be placed on any available port, with the exception of port 11211 if the bucket is not named “default”. Only one non-SASL bucket may be placed on any individual port. These buckets may be reached with a vBucket aware smart client, an ASCII client or a binary client that doesn’t use SASL authentication.

-

SASL Buckets

SASL authenticating Couchbase buckets may only be placed on port 11211, and each bucket is differentiated by its name and password. SASL buckets may not be placed on any port other than 11211. These buckets can be reached with either a vBucket aware smart client or a binary client that has SASL support. These buckets cannot be reached with ASCII clients.
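The port and authentication rules for the three bucket interface types can be summarized as a small validation routine. This is only an illustrative Python sketch of the rules as stated above; the function `check_bucket` and its parameters are hypothetical and do not correspond to any Couchbase Server API.

```python
DEFAULT_PORT = 11211


def check_bucket(name, port, sasl, non_sasl_ports_in_use=frozenset()):
    """Return a list of rule violations for a proposed bucket (empty if valid).

    Hypothetical helper that encodes the placement rules described in the text.
    """
    problems = []
    if name == "default":
        if sasl:
            problems.append('the "default" bucket must be non-SASL')
        if port != DEFAULT_PORT:
            problems.append('the "default" bucket must use port 11211')
    elif sasl:
        if port != DEFAULT_PORT:
            problems.append("SASL buckets must use port 11211")
    else:
        if port == DEFAULT_PORT:
            problems.append("only the default non-SASL bucket may use port 11211")
        if port in non_sasl_ports_in_use:
            problems.append("another non-SASL bucket already uses this port")
    return problems


print(check_bucket("default", 11211, sasl=False))
print(check_bucket("sessions", 11212, sasl=True))
```

An empty list means the proposed bucket satisfies the rules; otherwise each entry names the rule that was broken.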

Smart clients discover changes in the cluster using the Couchbase Management REST API. Buckets can be used to isolate individual applications to provide multi-tenancy, or to isolate data types in the cache to enhance performance and visibility. Couchbase Server allows you to configure different ports to access different buckets, and gives you the option to access isolated buckets using either the binary protocol with SASL authentication, or the ASCII protocol with no authentication.

Couchbase Server enables you to use and mix different types of buckets, Couchbase and Memcached, as appropriate in your environment. Buckets of different types still share the same resource pool and cluster resources. Quotas for RAM and disk usage are configurable per bucket so that resource usage can be managed across the cluster. Quotas can be modified on a running cluster so that administrators can reallocate resources as usage patterns or priorities change over time.

For more information about creating and managing buckets, see the following resources:

Bucket RAM Quotas: see Changing Couchbase Quotas.

Creating and Managing Buckets with Couchbase Web Console: see Viewing Data Buckets.

Creating and Managing Buckets with Couchbase REST API: see Managing Buckets.

Creating and Managing Buckets with Couchbase CLI (Command-Line Tool): see couchbase-cli Tool.

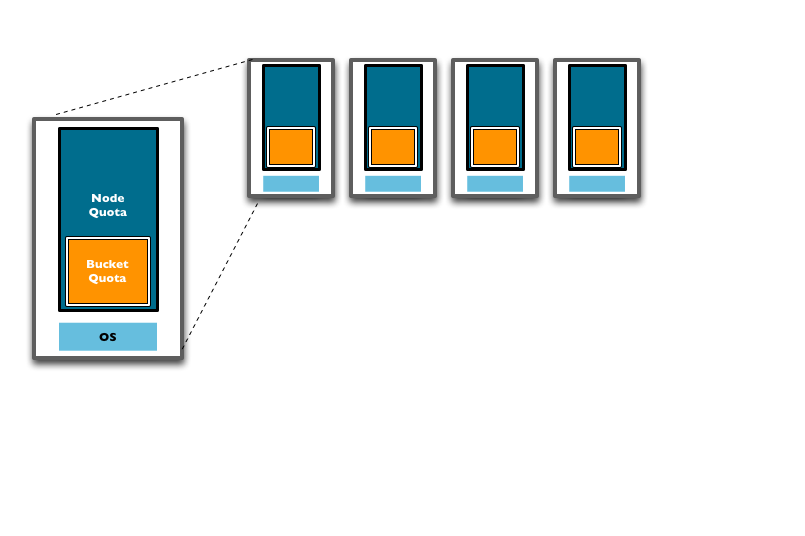

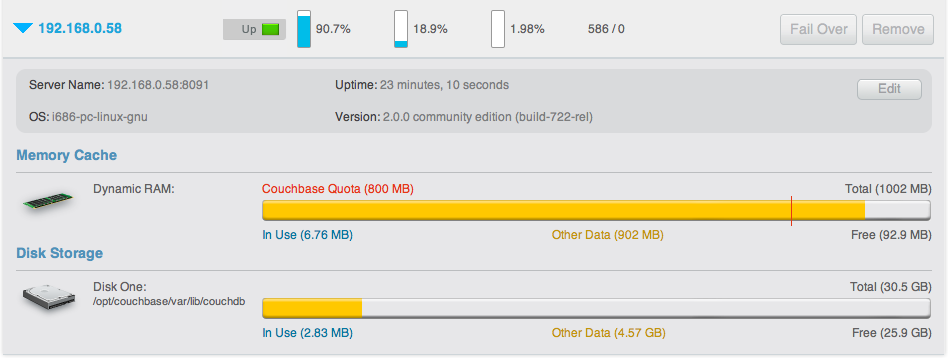

RAM Quotas¶

RAM is allocated to Couchbase Server in two different configurable quantities, the Server Quota and Bucket Quota. For more information about creating and changing these two settings, see Other couchbase-cli Usage and Creating and Editing Data Buckets.

-

Server Quota

The Server Quota is the RAM that is allocated to the server when Couchbase Server is first installed. This sets the limit of RAM allocated by Couchbase for caching data for all buckets and is configured on a per-node basis. The Server Quota is initially configured when the first server in your cluster is configured, and the quota is identical on all nodes. For example, if you have 10 nodes and a 16GB Server Quota, there is 160GB RAM available across the cluster. If you were to add two more nodes to the cluster, the new nodes would need 16GB of free RAM, and the aggregate RAM available in the cluster would be 192GB.

-

Bucket Quota

The Bucket Quota is the amount of RAM allocated to an individual bucket for caching data. Bucket quotas are configured on a per-node basis and are allocated out of the RAM defined by the Server Quota. For example, if you create a new bucket with a Bucket Quota of 1GB, in a 10 node cluster there would be an aggregate bucket quota of 10GB across the cluster. Adding two nodes to the cluster would extend your aggregate bucket quota to 12GB.

From this description and diagram, you can see that adding new nodes to the cluster expands the overall RAM quota, and the bucket quota, increasing the amount of information that can be kept in RAM.
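The quota arithmetic in the examples above can be reproduced with a few lines of Python. This is a minimal sketch; the function names are hypothetical, and the check that bucket quotas must fit within the Server Quota reflects the statement that bucket quotas are allocated out of the Server Quota.

```python
def aggregate_quota_gb(per_node_quota_gb, node_count):
    """Aggregate RAM quota across a cluster, given a per-node quota."""
    return per_node_quota_gb * node_count


def fits_server_quota(bucket_quotas_gb, server_quota_gb):
    """True if the per-node bucket quotas fit inside the per-node Server Quota."""
    return sum(bucket_quotas_gb) <= server_quota_gb


print(aggregate_quota_gb(16, 10))  # Server Quota of 16GB on 10 nodes
print(aggregate_quota_gb(16, 12))  # after adding two nodes
print(aggregate_quota_gb(1, 10))   # Bucket Quota of 1GB on 10 nodes
print(aggregate_quota_gb(1, 12))   # after adding two nodes
print(fits_server_quota([1, 4, 8], 16))
```

Because both quotas are set per node, the aggregate figures grow in step with the number of nodes.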

The Bucket Quota is used by the system to determine when data should be ejected from memory. Bucket Quotas are dynamically configurable within the limit of your Server Quota, and enable you to individually control the caching of information in memory on a per bucket basis. You can therefore configure different buckets to meet your caching RAM requirements.

The Server Quota is also dynamically configurable, but care must be taken to ensure that the nodes in your cluster have the available RAM to support your chosen RAM quota configuration.

For more information on changing Couchbase Quotas, see Changing Couchbase Quotas.

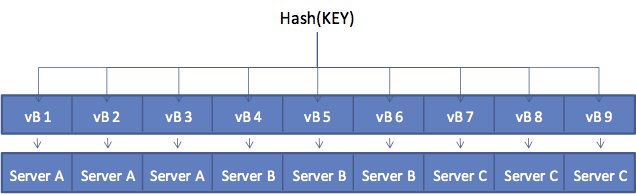

vBuckets¶

A vBucket is defined as the owner of a subset of the key space of a Couchbase cluster. These vBuckets are used to allow information to be distributed effectively across the cluster. The vBucket system is used both for distributing data, and for supporting replicas (copies of bucket data) on more than one node.

Clients access the information stored in a bucket by communicating directly with the node responsible for the corresponding vBucket. This direct access enables clients to communicate with the node storing the data, rather than using a proxy or redistribution architecture. The result is that the physical topology is abstracted from the logical partitioning of data. This architecture is what gives Couchbase Server its elasticity.

This architecture differs from the method used by memcached, which uses client-side key hashes to determine the server from a defined list. This requires active management of the list of servers, and specific hashing algorithms such as Ketama to cope with changes to the topology. The structure is also more flexible and able to cope with changes than the typical sharding arrangement used in an RDBMS environment.

vBuckets are not a user-accessible component, but they are a critical component of Couchbase Server and are vital to its availability support and elastic nature.

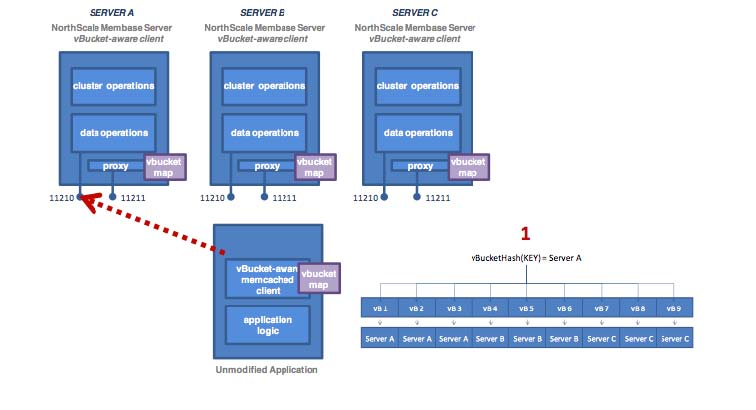

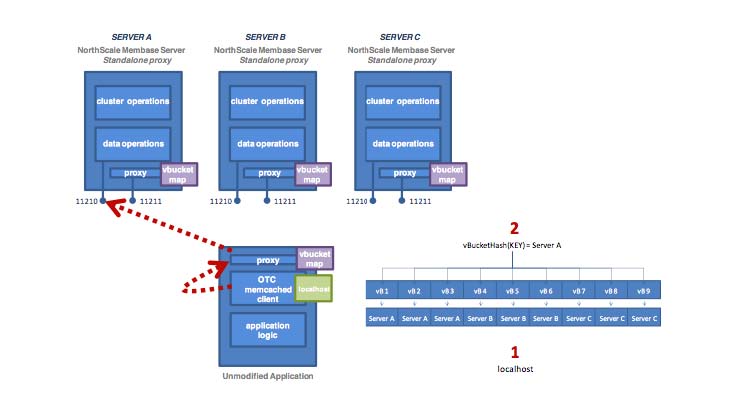

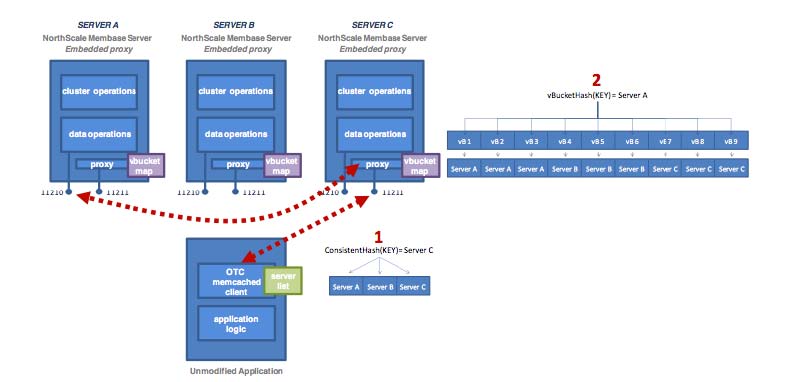

Every document ID belongs to a vBucket. A mapping function is used to calculate the vBucket to which a given document belongs. In Couchbase Server, that mapping function is a hashing function that takes a document ID as input and outputs a vBucket identifier. Once the vBucket identifier has been computed, a table is consulted to look up the server that “hosts” that vBucket. The table contains one row per vBucket, pairing the vBucket to its hosting server. A server appearing in this table can be (and usually is) responsible for multiple vBuckets.

The diagram below shows how the Key to Server mapping (vBucket map) works. There are three servers in the cluster. A client wants to look up (get) the value of KEY. The client first hashes the key to calculate the vBucket which owns KEY. In this example, the hash resolves to vBucket 8 (vB8). By examining the vBucket map, the client determines that Server C hosts vB8. The get operation is sent directly to Server C.

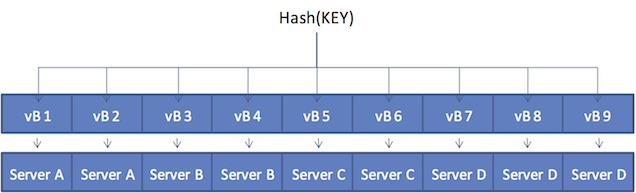

After some period of time, there is a need to add a server to the cluster. A new node, Server D is added to the cluster and the vBucket Map is updated.

The vBucket map is updated during the rebalance operation; the updated map is then sent to all the cluster participants, including the other nodes, any connected “smart” clients, and the Moxi proxy service.

Within the new four-node cluster model, when a client again wants to get the value of KEY, the hashing algorithm will still resolve to vBucket 8 (vB8). The new vBucket map however now maps that vBucket to Server D. The client now communicates directly with Server D to obtain the information.
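The key-to-server lookup described in this section can be sketched in Python as follows. This is an illustration under stated assumptions rather than the server's implementation: the use of CRC32 reduced modulo the vBucket count, the small `NUM_VBUCKETS` value, and the helper names are all chosen for the example.

```python
import zlib

NUM_VBUCKETS = 16  # small illustrative value, not the server's real setting


def vbucket_for(key, num_vbuckets=NUM_VBUCKETS):
    """Hash a document ID to a vBucket identifier (CRC32 is an assumption)."""
    return zlib.crc32(key.encode("utf-8")) % num_vbuckets


def server_for(key, vbucket_map):
    """Look up the server that hosts the vBucket owning the key."""
    return vbucket_map[vbucket_for(key)]


# Three-server map: one entry per vBucket, each naming its hosting server.
servers = ["Server A", "Server B", "Server C"]
map_before = [servers[vb % len(servers)] for vb in range(NUM_VBUCKETS)]

# After Server D joins and a rebalance completes, some vBuckets are hosted by
# Server D. Here the vBucket that owns "KEY" is moved, as in the example above.
map_after = list(map_before)
map_after[vbucket_for("KEY")] = "Server D"

print(vbucket_for("KEY"), server_for("KEY", map_before))
print(vbucket_for("KEY"), server_for("KEY", map_after))
```

The hash of the key never changes; only the table entry for its vBucket does, which is why clients need an updated map rather than a new hashing scheme.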

Caching Layer¶

The architecture of Couchbase Server includes a built-in caching layer. This caching layer acts as a central part of the server and provides very rapid reads and writes of data. Other database solutions read and write data from disk, which results in much slower performance. One alternative approach is to install and manage a caching layer as a separate component which works with a database. This approach also has drawbacks, because managing the transfer of data between the caching layer and the database, and managing the caching layer itself, requires significant custom code and effort.

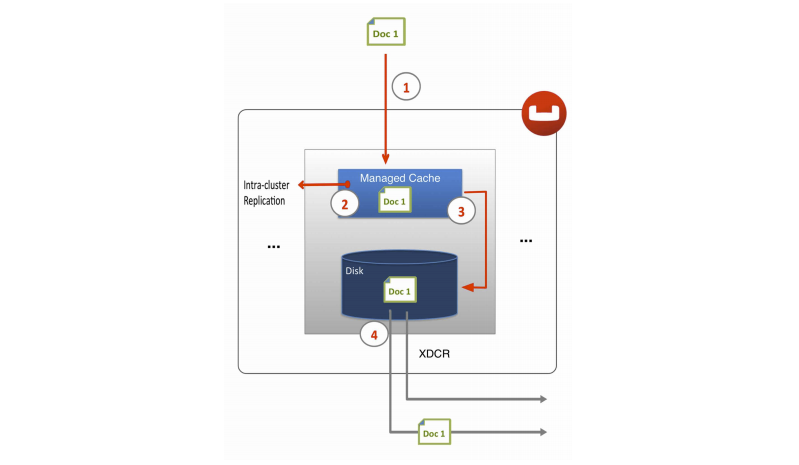

In contrast, Couchbase Server automatically manages the caching layer and coordinates with disk space to ensure that enough cache space exists to maintain performance. Couchbase Server automatically places items that come into the caching layer into a disk queue so that it can write these items to disk. If the server determines that a cached item is infrequently used, it can remove it from RAM to free space for other items. Similarly, the server can retrieve infrequently-used items from disk and store them in the caching layer when the items are requested. The entire process of managing data between the caching layer and the data persistence layer is therefore handled by the server. In order to provide the most frequently-used data while maintaining high performance, Couchbase Server manages a working set of your entire information; this set consists of all the data you most frequently access and is kept in RAM for high performance.

Couchbase automatically moves data from RAM to disk asynchronously in the background in order to keep frequently used information in memory, and less frequently used data on disk. Couchbase constantly monitors the information accessed by clients, and decides how to keep the active data within the caching layer. Data is ejected to disk from memory in the background while the server continues to service active requests. During sequences of high writes to the database, clients will be notified that the server is temporarily out of memory until enough items have been ejected from memory to disk. The asynchronous nature and use of queues in this way enables reads and writes to be handled at a very fast rate, while removing the typical load and performance spikes that would otherwise cause a traditional RDBMS to produce erratic performance.

When the server stores data on disk and a client requests that data, the client sends an individual document ID and the server determines whether the information exists. Couchbase Server does this with metadata structures. The metadata holds information about each document in the database, and this information is held in RAM. This means that the server can always return a ‘document ID not found’ response for an invalid document ID, immediately return the data from RAM, or return it after fetching it from disk.
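The three possible outcomes of a lookup can be sketched as follows. This is a simplified Python model, not the server's implementation; the dictionaries standing in for the metadata, the RAM cache and the disk, and the `lookup` function itself, are assumptions made for the example.

```python
def lookup(key, metadata, ram_cache, disk):
    """Resolve a get request, consulting the in-RAM metadata first."""
    if key not in metadata:
        return ("not_found", None)   # answered without touching disk
    if key in ram_cache:
        return ("from_ram", ram_cache[key])
    value = disk[key]                # value was ejected; fetch it back
    ram_cache[key] = value           # keep it in RAM for later requests
    return ("from_disk", value)


metadata = {"user::1", "user::2"}    # IDs of all known documents
ram_cache = {"user::1": {"name": "Ada"}}
disk = {"user::1": {"name": "Ada"}, "user::2": {"name": "Grace"}}

print(lookup("user::1", metadata, ram_cache, disk))
print(lookup("user::2", metadata, ram_cache, disk))
print(lookup("user::9", metadata, ram_cache, disk))
```

Because the metadata for every document stays in RAM, a request for an unknown document ID never has to wait on disk.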

Disk Storage¶

For performance, Couchbase Server mainly stores and retrieves information for clients using RAM. At the same time, Couchbase Server will eventually store all data to disk to provide a higher level of reliability. If a node fails and you lose all data in the caching layer, you can still recover items from disk. We call this process of disk storage eventual persistence because the server does not block a client while it writes to disk; instead, it writes data to the caching layer and puts the data into a disk write queue to be persisted to disk. Disk persistence enables you to perform backup and restore operations, and enables you to grow your datasets larger than the built-in caching layer. For more information, see Ejection, Eviction and Working Set Management.
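Eventual persistence can be illustrated with a small Python model in which a write is acknowledged as soon as it reaches RAM and is persisted later from a queue. The `Node` class and its methods are hypothetical; on a real server the queue is drained by a background process rather than by an explicit call.

```python
from collections import deque


class Node:
    """Toy model of a node with a RAM cache, a disk store and a write queue."""

    def __init__(self):
        self.ram = {}
        self.disk = {}
        self.write_queue = deque()

    def set(self, key, value):
        """Store in RAM, queue for persistence, and acknowledge immediately."""
        self.ram[key] = value
        self.write_queue.append(key)
        return "stored"

    def flush(self):
        """Drain the disk write queue, persisting the latest value of each key."""
        while self.write_queue:
            key = self.write_queue.popleft()
            self.disk[key] = self.ram[key]


node = Node()
print(node.set("session::42", {"cart": ["book"]}))
print("session::42" in node.disk)   # not yet persisted
node.flush()
print("session::42" in node.disk)   # persisted after the queue is drained
```

The client is never blocked on the disk write, which is the trade-off the term eventual persistence describes.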

When the server identifies an item that needs to be loaded from disk because it is not in active memory, the process is handled by a background process that processes the load queue and reads the information back from disk and into memory. The client is made to wait until the data has been loaded back into memory before the information is returned.

Document Deletion from Disk

Couchbase Server will never delete entire items from disk unless a client explicitly deletes the item from the database or the expiration value for the item is reached. The ejection mechanism removes an item from RAM, while keeping a copy of the key and metadata for that document in RAM and also keeping a copy of that document on disk. For more information about document expiration and deletion, see Couchbase Developer Guide, About Document Expiration.

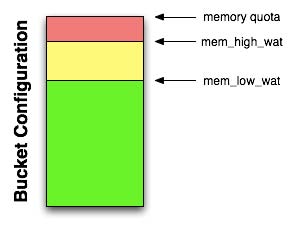

Ejection, Eviction and Working Set Management¶

Ejection is a process automatically performed by Couchbase Server; it is the process of removing data from RAM to provide room for frequently-used items. When Couchbase Server ejects information, it works in conjunction with the disk persistence system to ensure that data in RAM has been persisted to disk and can be safely retrieved back into RAM if the item is requested. The process that Couchbase Server performs to free space in RAM, and to ensure the most-used items are still available in RAM is also known as working set management.

In addition to the memory quota for the caching layer, there are two watermarks the engine will use to determine when it is necessary to start persisting more data to disk. These are mem_low_wat and mem_high_wat.

As the caching layer becomes full of data, eventually the mem_low_wat is passed. At this time, no action is taken. As data continues to load, it will eventually reach mem_high_wat. At this point a background job is scheduled to ensure items are migrated to disk and the memory is then available for other Couchbase Server items. This job will run until measured memory reaches mem_low_wat. If the rate of incoming items is faster than the migration of items to disk, the system may return errors indicating there is not enough space. This will continue until there is available memory. The process of removing data from the caching layer to make way for the actively used information is called ejection, and is controlled automatically through thresholds set on each configured bucket in your Couchbase Server Cluster.
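The watermark behavior can be modeled with a short Python function. This is a simplified sketch: memory is tracked as a single number in arbitrary units, every item is assumed to have the same size, and the function name `run_ejection` is hypothetical. Only `mem_low_wat` and `mem_high_wat` come from the description above.

```python
def run_ejection(mem_used, mem_low_wat, mem_high_wat, item_size):
    """Return memory in use after the background ejection job, if it runs.

    The job starts only once usage reaches mem_high_wat, and then ejects
    items until usage is at or below mem_low_wat.
    """
    if mem_used < mem_high_wat:
        return mem_used  # below the high watermark: no action is taken
    while mem_used > mem_low_wat:
        mem_used -= item_size
    return mem_used


print(run_ejection(70, mem_low_wat=60, mem_high_wat=75, item_size=5))
print(run_ejection(80, mem_low_wat=60, mem_high_wat=75, item_size=5))
```

Usage between the two watermarks is left alone, so the job runs in bursts rather than continuously.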

Some of you may be using only memcached buckets with Couchbase Server; in this case the server provides only a caching layer as storage and no data persistence on disk. If your server runs out of space in RAM, it will evict items from RAM on a least recently used basis (LRU). Eviction means the server will remove the key, metadata and all other data for the item from RAM. After eviction, the item is irretrievable.
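Least-recently-used eviction can be sketched with Python's `collections.OrderedDict`. This is a generic LRU illustration, not the memcached bucket implementation: the `LRUCache` class is hypothetical, and capacity is counted in items rather than bytes to keep the example short.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used item."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.items = OrderedDict()

    def get(self, key):
        if key not in self.items:
            return None              # evicted or never stored: irretrievable
        self.items.move_to_end(key)  # mark as most recently used
        return self.items[key]

    def set(self, key, value):
        if key in self.items:
            self.items.move_to_end(key)
        self.items[key] = value
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used


cache = LRUCache(capacity=2)
cache.set("a", 1)
cache.set("b", 2)
cache.get("a")       # "a" is now more recently used than "b"
cache.set("c", 3)    # cache is full, so "b" is evicted
print(cache.get("b"), cache.get("a"), cache.get("c"))
```

Unlike ejection from a Couchbase bucket, nothing remains on disk here, so an evicted item can only be restored by the application writing it again.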

For more detailed technical information about ejection and working set management, including any administrative tasks which impact this process, see Ejection and Working Set Management.

Expiration¶

Each document stored in the database has an optional expiration value (TTL, time to live). The default is for there to be no expiration, i.e. the information will be stored indefinitely. The expiration can be used for data that naturally has a limited life that you want to be automatically deleted from the entire database.

The expiration value is user-specified on a document basis at the point when the data is stored. The expiration can also be updated when the data is updated, or explicitly changed through the Couchbase protocol. The expiration time can either be specified as a relative time (for example, in 60 seconds), or absolute time (31st December 2012, 12:00pm).
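The difference between relative and absolute expiration values can be sketched as follows. The example assumes the memcached protocol convention, in which values of up to 30 days are treated as an offset in seconds from the current time and larger values are treated as absolute Unix timestamps; the function name `expiry_timestamp` is hypothetical.

```python
from datetime import datetime, timezone

THIRTY_DAYS = 30 * 24 * 60 * 60  # threshold assumed from the memcached protocol


def expiry_timestamp(expiration, now):
    """Resolve an expiration value to an absolute Unix timestamp.

    An expiration of 0 means the item never expires (returns None).
    """
    if expiration == 0:
        return None
    if expiration <= THIRTY_DAYS:
        return now + expiration      # relative: seconds from now
    return expiration                # absolute: already a Unix timestamp


now = int(datetime(2012, 12, 1, tzinfo=timezone.utc).timestamp())
absolute = int(datetime(2012, 12, 31, 12, 0, tzinfo=timezone.utc).timestamp())

print(expiry_timestamp(60, now) - now)           # relative, in 60 seconds
print(expiry_timestamp(absolute, now) == absolute)
print(expiry_timestamp(0, now))                  # no expiration
```

Check the documentation for your client library before relying on this threshold, since some libraries expose expiration through their own types.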

Typical uses for an expiration value include web session data, where you want the actively stored information to be removed from the system if the user activity has stopped and not been explicitly deleted. The data will time out and be removed from the system, freeing up RAM and disk for more active data.

Server Warmup¶

Anytime you restart the Couchbase Server, or when you restore data to a server instance, the server must undergo a warmup process before it can handle requests for the data. During warmup the server loads data from disk into RAM; after the warmup process completes, the data is available for clients to read and write. Depending on the size and configuration of your system and the amount of data persisted in your system, server warmup may take some time to load all of the data into memory.

Couchbase Server 2.0 provides a more optimized warmup process; instead of loading data sequentially from disk into RAM, it divides the data to be loaded and handles it in multiple phases. Couchbase Server is also able to begin serving data before it has actually loaded all the keys and data from vBuckets. For more technical details about server warmup and how to manage server warmup, see Handling Server Warmup.

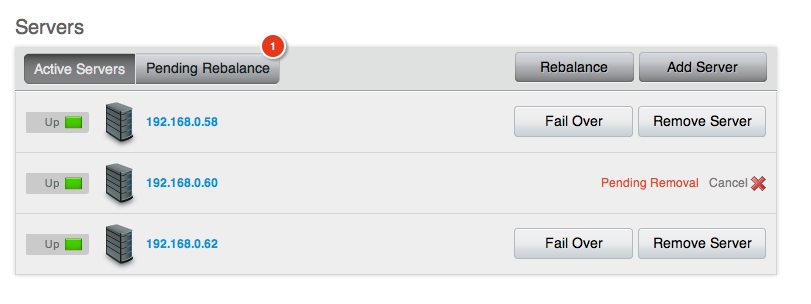

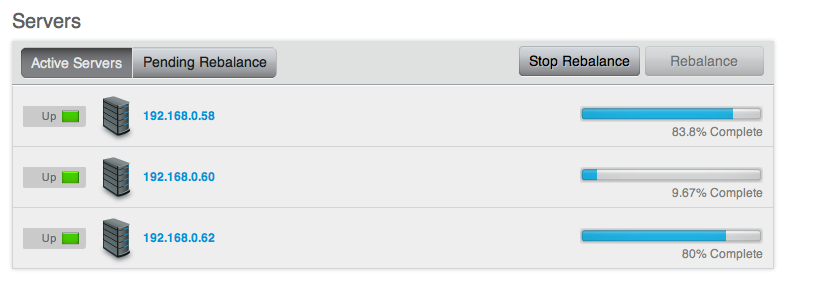

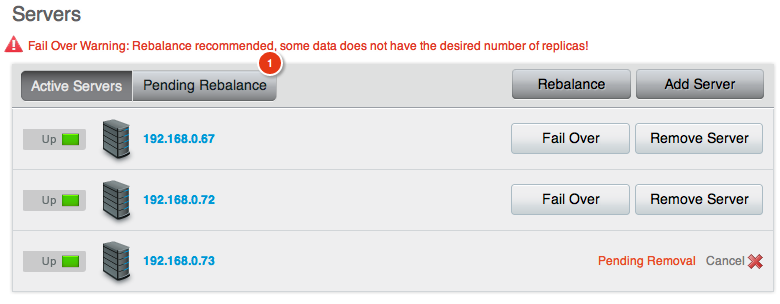

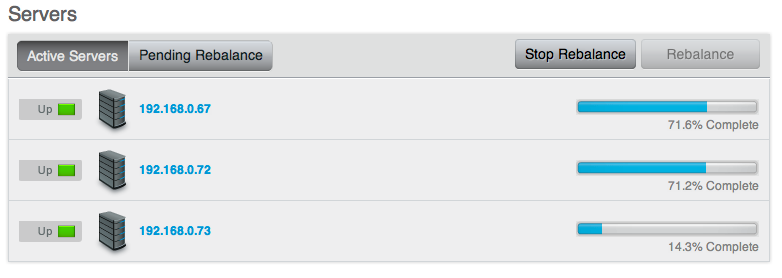

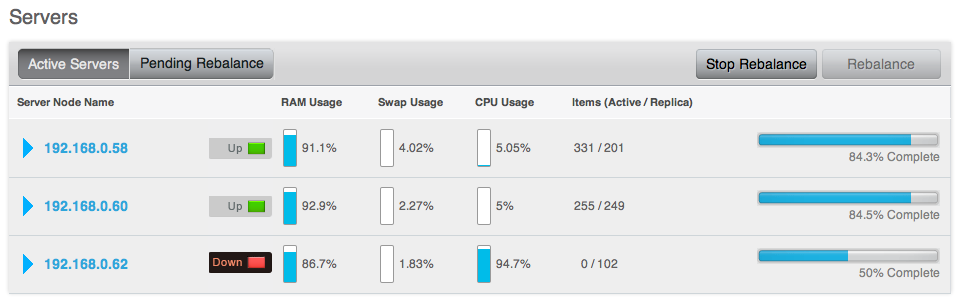

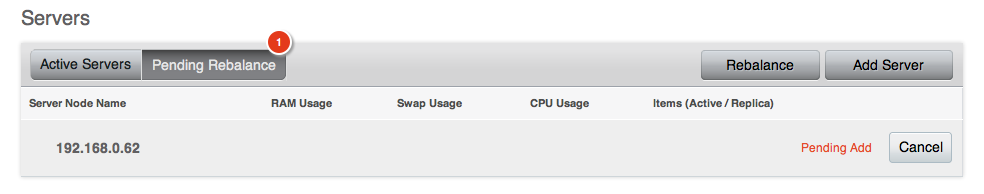

Rebalancing¶

The way data is stored within Couchbase Server is through the distribution offered by the vBucket structure. If you want to expand or shrink your Couchbase Server cluster then the information stored in the vBuckets needs to be redistributed between the available nodes, with the corresponding vBucket map updated to reflect the new structure. This process is called rebalancing.

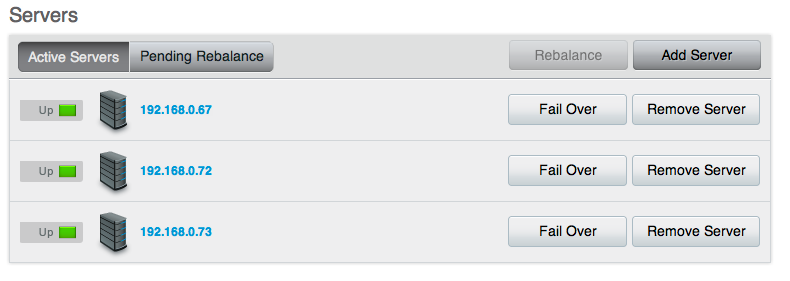

Rebalancing is a deliberate process that you need to initiate manually when the structure of your cluster changes. The rebalance process changes the allocation of the vBuckets used to store the information and then physically moves the data between the nodes to match the new structure.

The rebalancing process can take place while the cluster is running and servicing requests. Clients using the cluster read and write to the existing structure with the data being moved in the background between nodes. Once the moving process has been completed, the vBucket map is updated and communicated to the smart clients and the proxy service (Moxi).

The result is that the distribution of data across the cluster has been rebalanced, or smoothed out, so that the data is evenly distributed across the database, taking into account the data and replicas of the data required to support the system.
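The effect of a rebalance on the vBucket map can be sketched in Python. This is an illustrative algorithm only, not the one Couchbase Server uses: it ignores replicas, treats every vBucket as equal in size, and the `rebalance` function is hypothetical. It keeps a vBucket on its current node where possible so that as little data as possible moves.

```python
from collections import Counter


def rebalance(vbucket_map, nodes):
    """Return a new vBucket map with vBuckets spread evenly over nodes."""
    n, k = len(vbucket_map), len(nodes)
    # Each node receives n // k vBuckets; the first n % k nodes get one extra.
    quota = {node: n // k + (1 if i < n % k else 0) for i, node in enumerate(nodes)}
    new_map = [None] * n
    load = Counter()
    # Keep vBuckets where they are while the hosting node still has room.
    for vb, host in enumerate(vbucket_map):
        if host in quota and load[host] < quota[host]:
            new_map[vb] = host
            load[host] += 1
    # Hand the remaining vBuckets to nodes that are below their quota.
    spare = [node for node in nodes for _ in range(quota[node] - load[node])]
    for vb in range(n):
        if new_map[vb] is None:
            new_map[vb] = spare.pop()
    return new_map


old_map = ["A", "B", "C"] * 4                     # 12 vBuckets on three nodes
new_map = rebalance(old_map, ["A", "B", "C", "D"])
moved = sum(1 for old, new in zip(old_map, new_map) if old != new)
print(Counter(new_map), moved)
```

The same function also covers shrinking a cluster: vBuckets hosted by a node that is no longer in the list are reassigned to the remaining nodes.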

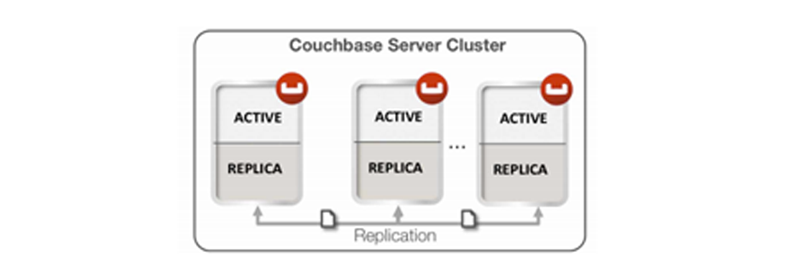

Replicas and Replication¶

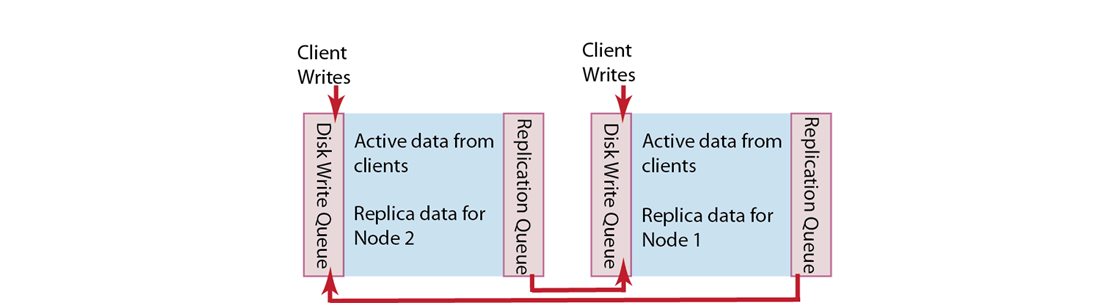

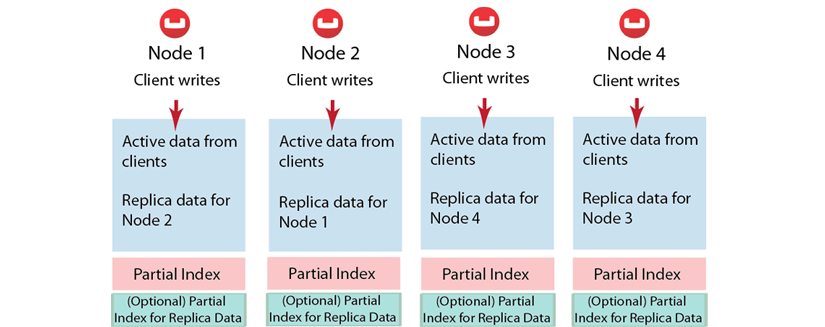

In addition to distributing information across the cluster for even data distribution and cluster performance, you can also establish replica vBuckets within a single Couchbase cluster.

A copy of data from one bucket, known as a source, will be copied to a destination, which we also refer to as the replica, or replica vBucket. The node that contains the replica vBucket is also referred to as the replica node, while the node containing the original data to be replicated is called a source node. Distribution of replica data is handled in the same way as data at a source node; portions of replica data will be distributed around the cluster to prevent a single point of failure.

After Couchbase has stored replica data at a destination node, the data will also be placed in a queue to be persisted on disk at that destination node. For more technical details about data replication within Couchbase clusters, or to learn about any configurations for replication, see Handling Replication within a Cluster.

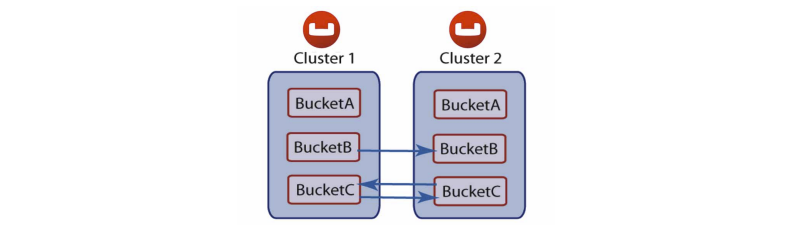

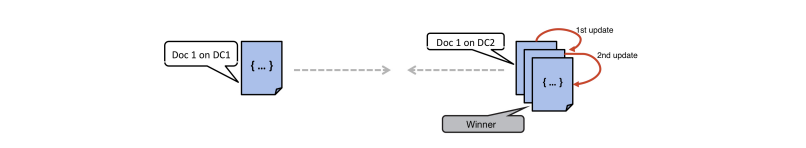

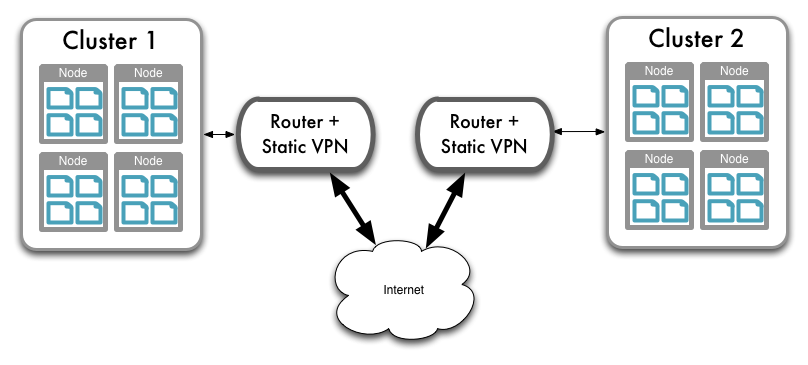

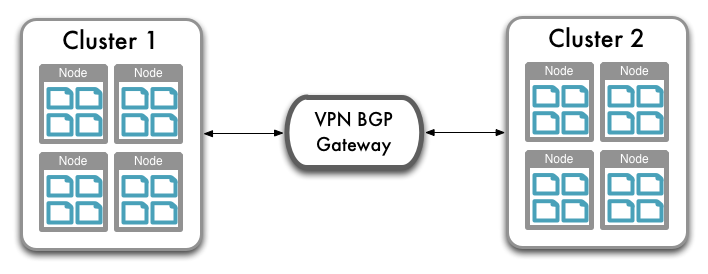

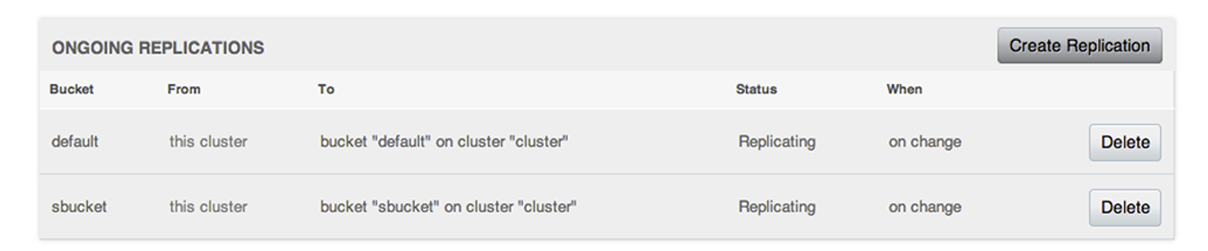

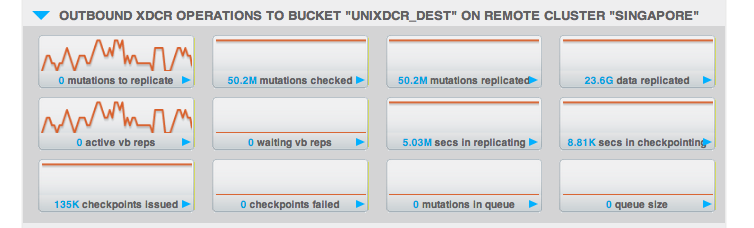

As of Couchbase Server 2.0, you are also able to perform replication between two Couchbase clusters. This is known as cross datacenter replication (XDCR) and can provide a copy of your data at a cluster which is closer to your users, or to provide the data in case of disaster recovery. For more information about replication between clusters via XDCR see Cross Datacenter Replication (XDCR).

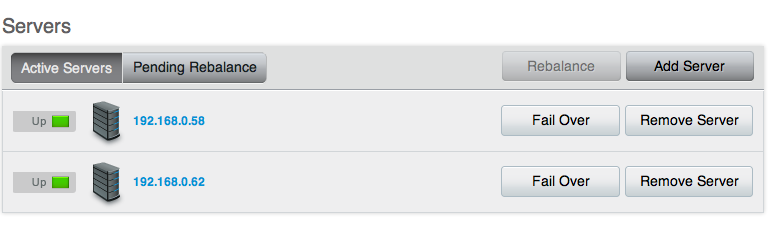

Failover¶

Information is distributed around a cluster using a series of replicas. For Couchbase buckets you can configure the number of replicas (complete copies of the data stored in the bucket) that should be kept within the Couchbase Server Cluster.

In the event of a failure in a server (either due to transient failure, or for administrative purposes), you can use a technique called failover to indicate that a node within the Couchbase Cluster is no longer available, and that the replica vBuckets for the server are enabled.

The failover process contacts each server that was acting as a replica and updates the internal table that maps client requests for documents to an available server.

Failover can be performed manually, or you can use the built-in automatic failover that reacts after a preset time when a node within the cluster becomes unavailable.
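The map update performed during failover can be sketched as follows. This is a simplified Python model, not the server's implementation: each vBucket is represented by a hypothetical list of the form `[active, replica, ...]`, and the `fail_over` function simply drops the failed node so that the first surviving replica becomes the active copy.

```python
def fail_over(vbucket_map, failed_node):
    """Remove failed_node from every vBucket's server list.

    Where the failed node held the active copy, the first remaining
    replica moves to the front of the list and becomes active.
    """
    return [
        [node for node in chain if node != failed_node]
        for chain in vbucket_map
    ]


# Each entry lists the active node first, followed by its replica node.
vbucket_map = [["A", "B"], ["B", "C"], ["C", "A"]]
after = fail_over(vbucket_map, "A")
print(after)
print([chain[0] for chain in after])  # active node for each vBucket
```

In this model the failed-over cluster is left with fewer copies of some vBuckets until the structure of the cluster is changed again.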

For more information, see Failing Over Nodes.
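
The failover behaviour described above can be sketched in a few lines of Python. This is a simplified model, not Couchbase Server's implementation: the `VBucket` class, the `fail_over` function, and the node names are all hypothetical, and the real cluster map carries more state than is shown here.

```python
from dataclasses import dataclass, field


@dataclass
class VBucket:
    """Hypothetical record: which node serves a vBucket and which hold replicas."""
    active: str
    replicas: list = field(default_factory=list)


def fail_over(vbucket_map, failed_node):
    """Update the map so that no vBucket depends on failed_node.

    Returns the IDs of the vBuckets whose replica was promoted to active.
    """
    promoted = []
    for vb_id, vb in vbucket_map.items():
        # The failed node can no longer hold a replica either.
        vb.replicas = [node for node in vb.replicas if node != failed_node]
        if vb.active == failed_node:
            if not vb.replicas:
                raise RuntimeError(f"vBucket {vb_id} has no replica to promote")
            # Enable the first remaining replica as the new active copy.
            vb.active = vb.replicas.pop(0)
            promoted.append(vb_id)
    return promoted


# Three vBuckets spread over three hypothetical nodes, one replica each.
vbucket_map = {
    0: VBucket("node-a", ["node-b"]),
    1: VBucket("node-b", ["node-c"]),
    2: VBucket("node-c", ["node-a"]),
}
promoted = fail_over(vbucket_map, "node-b")
```

After the call, requests for vBucket 1 are mapped to `node-c`, and vBucket 0 has no replica left until the cluster is rebalanced.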

TAP¶

The TAP protocol is an internal part of the Couchbase Server system and is used in a number of different areas to exchange data throughout the system. TAP provides a stream of data of the changes that are occurring within the system.

TAP is used during replication, to copy data between vBuckets used for replicas. It is also used during the rebalance procedure to move data between vBuckets and redistribute the information across the system.

Client Interface¶

Within Couchbase Server, the techniques and systems used to get information into and out of the database differ according to the level and volume of data that you want to access. The different methods can be identified according to the base operations of Create, Retrieve, Update and Delete:

-

Create

Information is stored into the database using the memcached protocol interface to store a value against a specified key. Bulk operations for setting the key/value pairs of a large number of documents at the same time are available, and these are more efficient than multiple smaller requests.

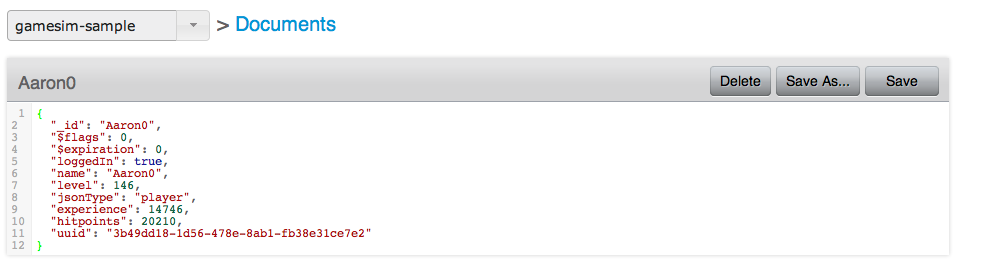

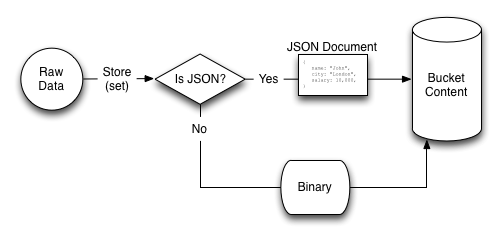

The value stored can be any binary value, including structured and unstructured strings, serialized objects (from the native client language), and native binary data (for example, images or audio). For use with the Couchbase Server View engine, information must be stored using the JavaScript Object Notation (JSON) format, which structures information as an object with nested fields, arrays, and scalar datatypes.

-

Retrieve

To retrieve information from the database, there are two methods available:

-

By Key

If you know the key used to store a particular value, then you can use the memcached protocol (or an appropriate memcached-compatible client library) to retrieve the value stored against that key. You can also perform bulk operations.

-

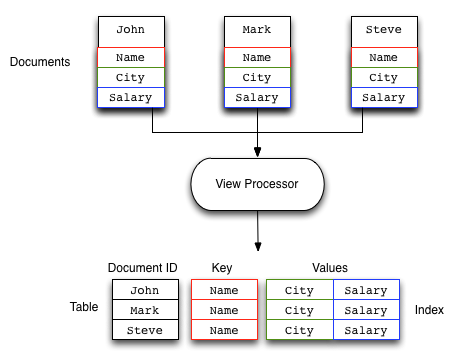

By View

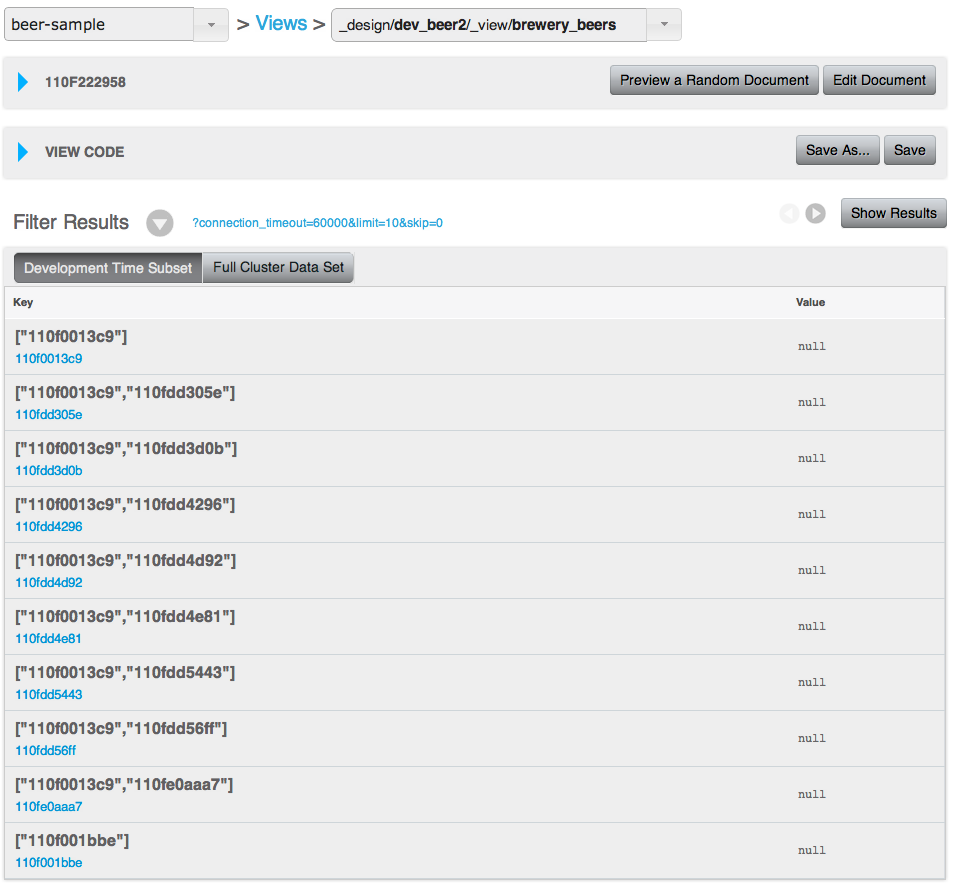

If you do not know the key, you can use the View system to write a view that outputs the information you need. The view generates one or more rows of information for each JSON object stored in the database. The view definition includes the keys (used to select specific items or ranges of information) and values. For example, you could create a view on contact information that outputs the JSON record by the contact's name, with a value containing the contact's address. Each view also outputs the key used to store the original object. If the view doesn't contain the information you need, you can use the returned key with the memcached protocol to obtain the complete record.

-

-

Update

To update information in the database, you must use the memcached protocol interface. The memcached protocol includes functions to directly update the entire contents, and also to perform simple operations, such as appending information to the existing record, or incrementing and decrementing integer values.

-

Delete

To delete information from Couchbase Server you need to use the memcached protocol which includes an explicit delete command to remove a key/value pair from the server.

However, Couchbase Server also allows information to be stored in the database with an expiry value. The expiry value states when a key/value pair should be automatically deleted from the entire database, and can be specified either as a relative time (for example, in 60 seconds) or as an absolute time (for example, 31st December 2012, 12:00pm).

The methods of creating, updating and retrieving information are critical to the way you work with storing data in Couchbase Server.
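
The relative and absolute forms of the expiry value mentioned above can be illustrated with a short sketch. It assumes the usual memcached protocol convention, in which values of up to 30 days are read as a number of seconds from now and larger values are read as an absolute Unix timestamp. The function name `expiry_to_absolute` is hypothetical and is not part of any client library.

```python
import calendar
import time

THIRTY_DAYS = 30 * 24 * 60 * 60  # 2,592,000 seconds


def expiry_to_absolute(expiry, now=None):
    """Return the Unix time at which an item would expire, or None for no expiry.

    Assumes the memcached convention: 0 means "never expire", values up to
    30 days are relative to now, and larger values are absolute timestamps.
    """
    if now is None:
        now = int(time.time())
    if expiry == 0:
        return None
    if expiry <= THIRTY_DAYS:
        return now + expiry
    return expiry


# Relative: expire 60 seconds after an (arbitrary) current time of 1000.
relative = expiry_to_absolute(60, now=1000)

# Absolute: 31st December 2012, 12:00pm, taken here as UTC.
noon_dec_31 = calendar.timegm((2012, 12, 31, 12, 0, 0))
absolute = expiry_to_absolute(noon_dec_31, now=1000)
```

Client libraries generally accept the expiry as an argument to their store operations; check the documentation for your library for the exact parameter.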

Administration Tools¶

Couchbase Server was designed to be as easy to use as possible, and does not require constant attention. Administration is, however, available through a number of different tools and systems. For a list of the most common administration tasks, see Administration Tasks.

Couchbase Server includes three solutions for managing and monitoring your Couchbase Server and cluster:

-

Web Administration Console

Couchbase Server includes a built-in web-administration console that provides a complete interface for configuring, managing, and monitoring your Couchbase Server installation.

For more information, see Using the Web Console.

-

Administration REST API

In addition to the Web Administration console, Couchbase Server incorporates a management interface exposed through the standard HTTP REST protocol. This REST interface can be called from your own custom management and administration scripts to support different operations.

Full details are provided in Using the REST API.

-

Command Line Interface

Couchbase Server includes a suite of command-line tools that provide information and control over your Couchbase Server and cluster installation. These can be used in combination with your own scripts and management procedures to provide additional functionality, such as automated failover, backups and other procedures. The command-line tools make use of the REST API.

For information on the command-line tools available, see Command-line Interface for Administration.
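
As a rough illustration of calling the REST interface from your own script, the sketch below builds an authenticated HTTP request using only the Python standard library. The host name, the credentials, and the helper name `build_cluster_request` are placeholders chosen for this example; the `/pools/default` path and port 8091 are assumed to be where your node exposes cluster details, so confirm both against Using the REST API for your version.

```python
import base64
import urllib.request


def build_cluster_request(host, username, password, port=8091, path="/pools/default"):
    """Build (but do not send) a GET request with HTTP Basic authentication."""
    url = f"http://{host}:{port}{path}"
    credentials = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(url)
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Accept", "application/json")
    return request


# Placeholder host and credentials; replace with your own.
request = build_cluster_request("localhost", "Administrator", "password")

# To send the request to a running node and parse the JSON response:
#
#   import json
#   with urllib.request.urlopen(request, timeout=5) as response:
#       details = json.load(response)
```

Separating request construction from sending keeps the example usable without a running server, and makes it easy to reuse the same helper for other paths.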

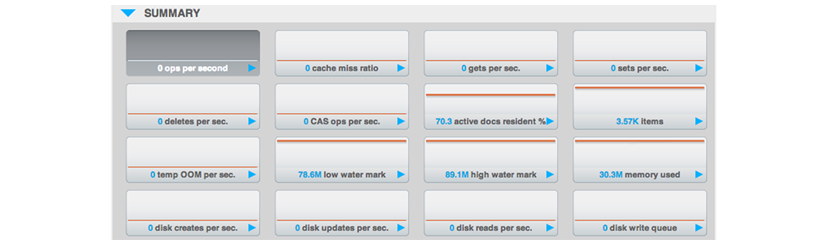

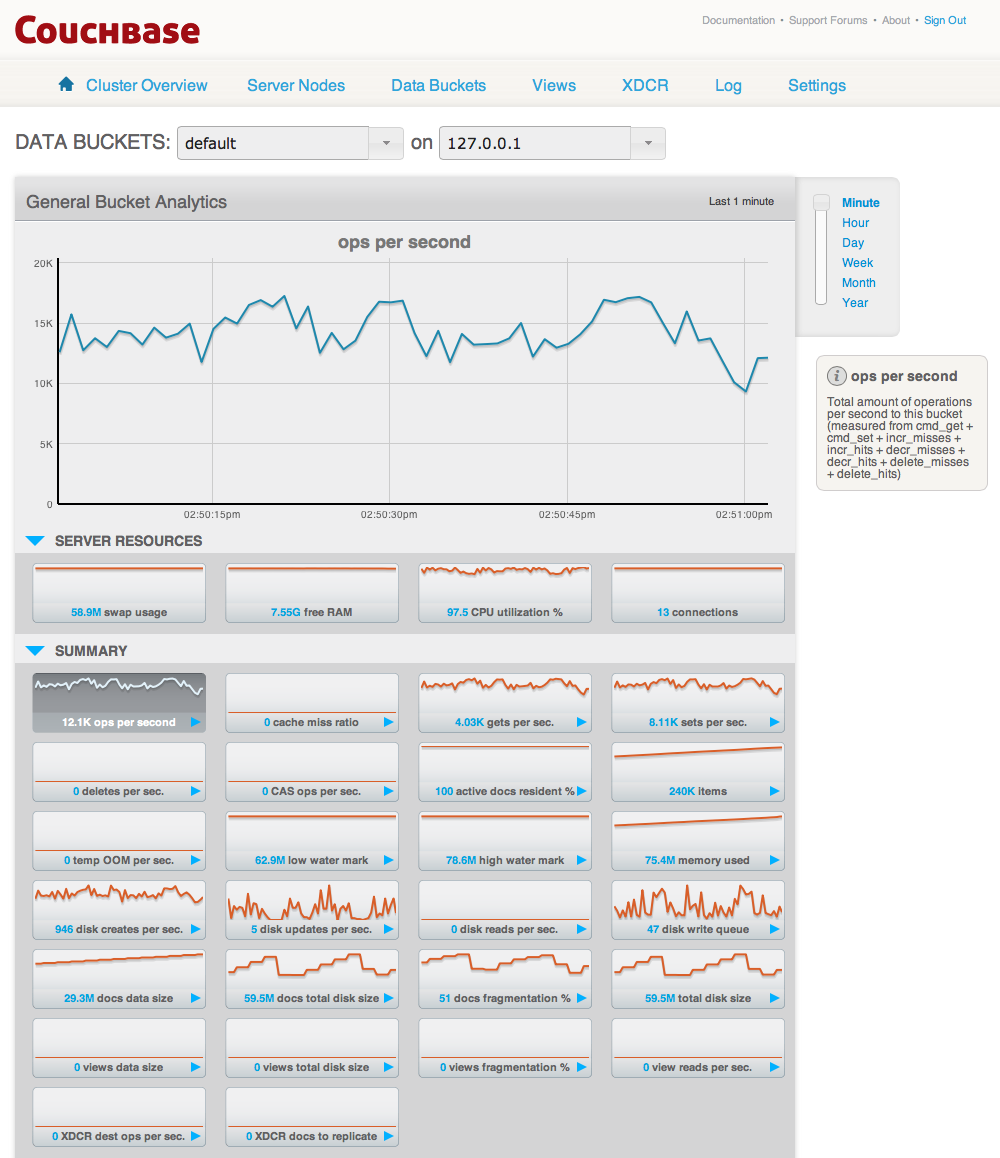

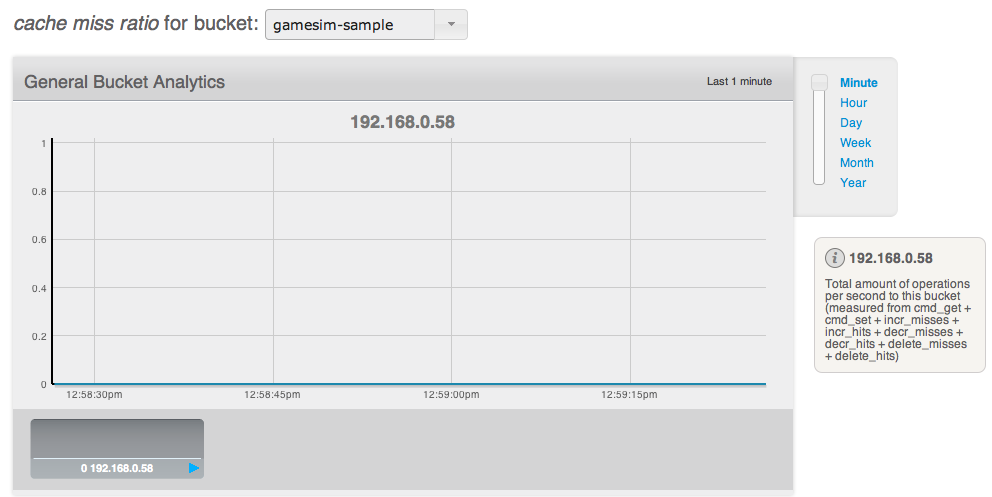

Statistics and Monitoring¶

To help you understand what your cluster is doing and how it is performing, Couchbase Server incorporates a complete set of statistical and monitoring information. The statistics are provided through all of the administration interfaces. Within the Web Administration Console, a complete suite of statistics is provided, including built-in real-time graphing and performance data.

The statistics are divided into a number of groups, allowing you to identify different states and performance information within your cluster:

-

By Node

Node statistics show CPU, RAM and I/O numbers on each of the servers and across your cluster as a whole. This information can be used to help identify performance and loading issues on a single server.

-

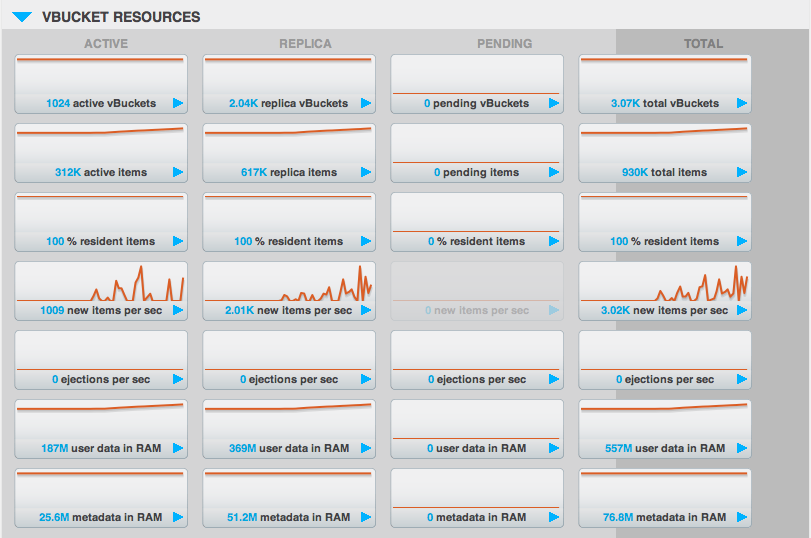

By vBucket

The vBucket statistics show the usage and performance numbers for the vBuckets used to store information in the cluster. These numbers are useful to determine whether you need to reconfigure your buckets or add servers to improve performance.

-

By View

View statistics display information about individual views in your system, including the CPU usage and disk space used so that you can monitor the effects and loading of a view on your Couchbase nodes. This information may indicate that your views need modification or optimization, or that you need to consider defining views across multiple design documents.

-

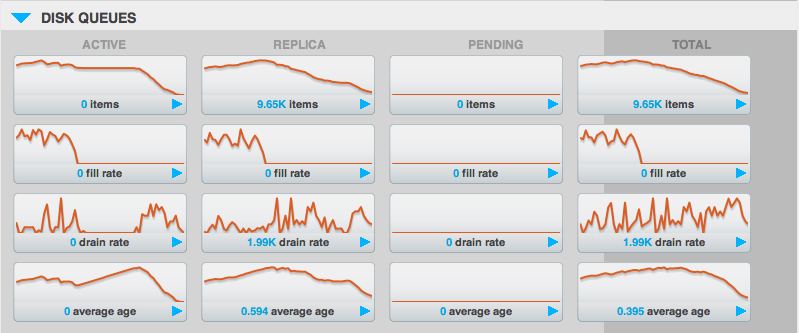

By Disk Queues

These statistics monitor the queues used to read and write information to disk and between replicas. This information can be helpful in determining whether you should expand your cluster to reduce disk load.

-

By TAP Queues

The TAP interface is used to monitor changes and updates to the database. TAP is used internally by Couchbase to provide replication between Couchbase nodes, but can also be used by clients for change notifications.

In nearly all cases the statistics can be viewed either for the cluster as a whole, so that you can monitor the overall RAM or disk usage for a given bucket, or for an individual server, so that you can identify issues within a single machine.

Migration to Couchbase¶

Couchbase Server is based on components from both Membase Server and CouchDB. If you are a user of these database systems, or are migrating from these to Couchbase Server, the following information may help in translating your understanding of the main concepts and terms.

Migrating for Membase Users¶

For an existing Membase user the primary methods for creating, adding, manipulating and retrieving data remain the same. In addition, the background operational elements of your Couchbase Server deployment will not differ from the basic running of a Membase cluster.

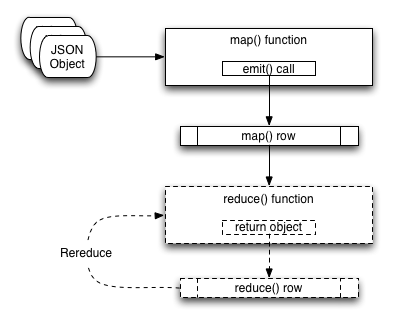

-

Term and Concept Differences

The following terms are new, or updated, in Couchbase Server:

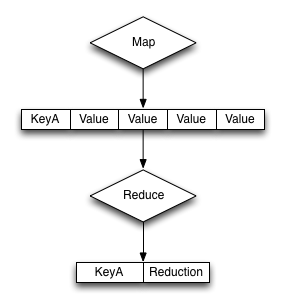

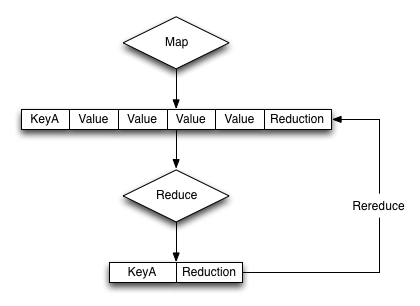

Views, and the associated terms of the map and reduce functions used to define views. Views provide an alternative method for accessing and querying information stored in key/value pairs within Couchbase Server. Views allow you to query and retrieve information based on the values of the contents of a key/value pair, providing the information has been stored in JSON format.

JSON (JavaScript Object Notation), a data representation format that is required to store the information in a format that can be parsed by the View system, is new.

Membase Server is now Couchbase Server.

Membase Buckets are now Couchbase Buckets.

-

Consistent Functionality

The core functionality of Membase, including the methods for basic creation, updating and retrieval of information all remain identical within Couchbase Server. You can continue to use the same client protocols for setting and retrieving information.

The administration, deployment, and core of the web console and administration interfaces are also identical. There are updates and improvements to support additional functionality which is included in existing tools. These include View-related statistics, and an update to the Web Administration Console for building and defining views.

-

Changed Functionality

The main difference of Couchbase Server is that in addition to the key/value data store nature of the database, you can also use Views to convert the information from individual objects in your database into lists or tables of records and information. Through the view system, you can also query data from the database based on the value (or fragment of a value) of the information that you have stored in the database against a key.

This fundamental difference means that applications no longer need to manually manage the concept of lists or sets of data by using other keys as a lookup or compounding values.

-

Operational and Deployment Differences

The main components of the operation and deployment of your Couchbase Server remain the same as with Membase Server. You can add new nodes, failover, rebalance and otherwise manage your nodes as normal.

However, the introduction of Views means that you will need to monitor and control the design documents and views that are created alongside your bucket configurations. Indexes are generated for each design document (i.e. multiple views), and for optimum reliability you may want to back up the generated index information to reduce the time to bring up a node in the event of a failure, as building a view from raw data on large datasets may take a significant amount of time.

In addition, you will need to understand how to recreate and rebuild View data, and how to compact and clean-up view information to help reduce disk space consumption and response times.

-

Client and Application Changes

Clients can continue to communicate with Couchbase Server using the existing memcached protocol interface for the basic create, retrieve, update and delete operations for key/value pairs. However, to access the View functionality you must use a client library that supports the view API (which uses HTTP REST).

To build Views that can output and query your stored data, your objects must be stored in the database using the JSON format. If you have been using the native serialization of your client library to convert a language-specific object so that it can be stored in Membase Server, you will now need to structure your data and use a native-to-JSON serialization solution, or reformat your data so that it can be formatted as JSON.
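
The relationship between JSON values and views described above can be modelled with a small sketch. In Couchbase Server the map function of a view is written in JavaScript and run by the server; Python is used here only to show the idea. The names `contact_map` and `build_view`, the sample keys, and the decision to skip non-JSON values are all assumptions made for this illustration.

```python
import json


def contact_map(key, doc):
    """Hypothetical map function: emit the contact's name with the address as value."""
    if "name" in doc and "address" in doc:
        yield doc["name"], doc["address"]


def build_view(bucket, map_fn):
    """Apply map_fn to every JSON object in bucket and return rows sorted by view key."""
    rows = []
    for key, raw in bucket.items():
        try:
            doc = json.loads(raw)
        except (ValueError, TypeError):
            continue  # this sketch simply skips values that are not valid JSON
        if not isinstance(doc, dict):
            continue
        for view_key, value in map_fn(key, doc):
            # Each row carries the key under which the original object was stored.
            rows.append({"id": key, "key": view_key, "value": value})
    return sorted(rows, key=lambda row: row["key"])


# A bucket modelled as a plain dictionary of key/value pairs.
bucket = {
    "contact::1": json.dumps({"name": "Smith", "address": "1 Main St"}),
    "contact::2": json.dumps({"name": "Adams", "address": "9 Elm Rd"}),
    "image::7": b"\x89PNG",  # binary value, stored natively rather than as JSON
}
rows = build_view(bucket, contact_map)
```

The resulting rows are ordered by contact name, and the `id` field of each row can be used with the key/value interface to fetch the complete record.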

Migrating for CouchDB Users¶

Although Couchbase Server incorporates the view engine functionality built into CouchDB, the bulk of the rest of the functionality is supported through the components and systems of Membase Server.

This change introduces a number of significant differences for CouchDB users that want to use Couchbase Server, particularly when migrating existing applications. However, you also gain the scalability and performance advantages of the Membase Server components.

-

Term and Concept Differences

Within CouchDB, information is stored in the database using the concept of a document ID (either explicit or automatically generated), against which the document (JSON) is stored. Within Couchbase, there is no document ID; instead, information is stored in the form of a key/value pair, where the key is equivalent to the document ID, and the value is equivalent to the document. The format of the data is the same.

Almost all of the HTTP REST API that makes up the interface for communicating with CouchDB does not exist within Couchbase Server. The basic document operations for creating, retrieving, updating and deleting information are entirely supported by the memcached protocol.

Also, beyond views, many of the other operations available at the client level within CouchDB are unsupported. For example, you cannot create a new database as a client, store attachments, or perform administration-style functions, such as view compaction.

Couchbase Server does not support the notion of databases; instead, information is stored within logical containers called Buckets. Buckets are logically equivalent to databases and can be used to compartmentalize information according to projects or needs. With Buckets you get the additional capability to determine the number of replicas of the information, and the port and authentication required to access the information.

-

Consistent Functionality

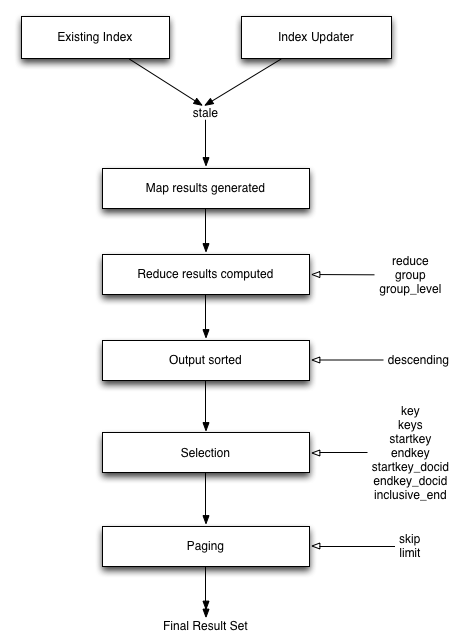

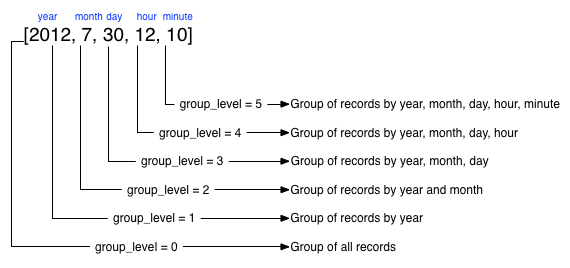

The operation and interface for querying and creating view definitions in Couchbase Server are mostly identical to those in CouchDB. Views are still based on the combination of a map/reduce function, and you should be able to port your map/reduce definitions to Couchbase Server without any issues. The main difference is that the view does not output the document ID, but, as previously noted, outputs the key against which the key/value was stored in the database.

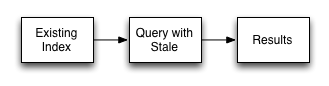

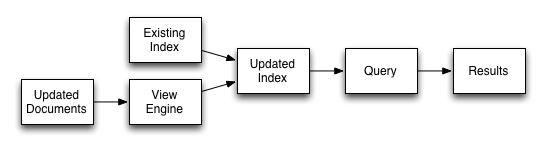

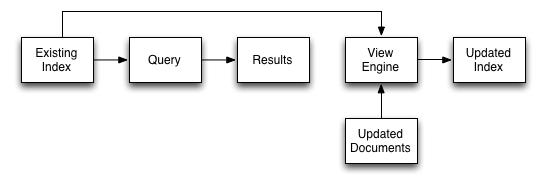

Querying views is also the same, and you use the same arguments to the query, such as start and end docids, returned row counts and query value specification, including the requirement to express your key in the form of a JSON value if you are using compound (array or hash) types in your view key specification. Stale views are also supported and, just as with CouchDB, accessing a stale view prevents Couchbase Server from updating the index.

-

Changed Functionality

There are many changes in the functionality and operation of Couchbase Server compared with CouchDB, including:

Basic data storage operations must use the memcached API.

Explicit replication is unsupported. Replication between nodes within a cluster is automatically configured and enabled and is used to help distribute information around the cluster.

You cannot replicate between a CouchDB database and Couchbase Server.

Explicit attachments are unsupported, but you can store additional files as new key/value pairs into the database.

CouchApps are unsupported.

Update handlers, document validation functions, and filters are not supported.

Futon does not exist. Instead, there is an entire Web Administration Console built into Couchbase Server that provides cluster configuration, monitoring and view/document update functionality.

-

Operational and Deployment Differences

From a practical level the major difference between CouchDB and Couchbase Server is that options for clustering and distribution of information are significantly different. With CouchDB you would need to handle the replication of information between multiple nodes and then use a proxy service to distribute the load from clients over multiple machines.

With Couchbase Server, the distribution of information is automatic within the cluster, and any Couchbase Server client library will automatically handle and redirect queries to the server that holds the information as it is distributed around the cluster.

-

Client and Application Changes

As your CouchDB based application already uses JSON for the document information, and a document ID to identify each document, the bulk of your application logic and view support remain identical. However, the HTTP REST API for basic CRUD operations must be updated to use the memcached protocol.

Additionally, because CouchApps are unsupported you will need to develop a client side application to support any application logic.

Installing and Upgrading¶

To start using Couchbase Server, you need to follow these steps:

Make sure your machine meets the system requirements. See Preparation.

Install Couchbase Server. See Installing Couchbase Server.

For more information on Upgrading Couchbase Server from a previous version, see Upgrading to Couchbase Server 2.0.x.

Test the installation by connecting and storing some data using the native Memcached protocol. See Testing Couchbase Server.

Set up the new Couchbase Server system by completing the web-based setup instructions. See Initial Server Setup.

Preparation¶

Mixed deployments, meaning deployments with both Linux and Windows server nodes, are not supported at this time. When you deploy a cluster, you should use the same operating system on all machines.

A Couchbase cluster with nodes on different operating systems will not function with one another due to differences in the number of shards. Therefore using multiple platforms within a cluster is not a supported configuration.

Due to limitations within the Mac OS X operating system, the Mac OS X implementation is incompatible with other operating systems. It is not possible either to mix operating systems within the same cluster, or configure XDCR between a Mac OS X and Windows or Linux cluster.

Your system should meet or exceed the following system requirements.

Supported Platforms¶

RedHat Enterprise Linux 5 and 6 (32-bit and 64-bit) – RedHat 5.8 recommended

-

CentOS 5 and 6 (32-bit and 64-bit) – 5.8 recommended. Later CentOS versions may work, but are unsupported. You may need to install a specific OpenSSL dependency by running:

root-shell> yum install openssl098e

Ubuntu Linux 10.04 (32-bit and 64-bit) for production

Ubuntu Linux 12.04 (32-bit and 64-bit) for production. Recommended.

-

Ubuntu Linux 13.04 may work, but is unsupported. You may need to install a specific OpenSSL dependency by running:

root-shell> apt-get install libssl0.9.8

About SELinux

Couchbase Server does not currently operate when SELinux is enabled. You should disable SELinux on each node in the cluster to prevent problems with the operation of Couchbase Server. For more information on disabling SELinux, see How to Disable SELinux.

Windows Server 2008 R2 with Service Pack 1 (64-bit). You must install Service Pack 1 on your Windows Server 2008 R2 installation before running Couchbase Server. You can obtain Service Pack 1 from Microsoft TechNet.

Windows 7 (64-bit).

Mac OS X 10.7 and 10.8 (64-bit only) for development only. Not supported in production. Couchbase Server on Mac OS X uses 64 vBuckets as opposed to the 1024 vBuckets used by other platforms. Due to this difference, if you need to move data between a Mac OS X cluster and a cluster hosted on another platform, please use cbbackup and cbrestore. For more information, see Backup and Restore Between Mac OS X and Other Platforms.
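
One way to see why the vBucket count matters is to look at how a key might be assigned to a vBucket. The sketch below hashes a key with CRC32 and takes the result modulo the number of vBuckets. This is an illustrative assumption only: the hash and the function name `vbucket_for_key` are not taken from Couchbase Server, whose exact algorithm may differ.

```python
import zlib


def vbucket_for_key(key, num_vbuckets):
    """Illustrative mapping of a key to a vBucket ID (not the server's exact algorithm)."""
    digest = zlib.crc32(key.encode("utf-8")) & 0xFFFFFFFF
    return digest % num_vbuckets


# Hypothetical document keys.
keys = ["user::1001", "user::1002", "order::77"]

# The same keys partitioned with 64 vBuckets (Mac OS X) and 1024 (other platforms).
mac_layout = {key: vbucket_for_key(key, 64) for key in keys}
other_layout = {key: vbucket_for_key(key, 1024) for key in keys}
```

Because the two layouts divide the key space into a different number of partitions, data organized by vBucket on one platform does not line up with the partitions on the other.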

Hardware Requirements¶

The following hardware requirements are recommended for installation:

Quad-core for key-value store, 64-bit CPU running at 3GHz

Six cores if you use XDCR and views.

16GB RAM (physical)

Block-based storage device (hard disk, SSD, EBS, iSCSI). Network filesystems (e.g. CIFS, NFS) are not supported.

A minimum specification machine should have the following characteristics:

Dual-core CPU running at 2GHz for key-value store

4GB RAM (physical)

For development and testing purposes, you can use less CPU and RAM than the minimum specified. This can be as low as 1GB of free RAM beyond operating system requirements and a single CPU core.

However, you should not use a configuration lower than that specified above in production. Performance on machines lower than the minimum specification will be significantly lower and should not be used as an indication of the performance on a production machine.

View performance on machines with less than 2 CPU cores will be significantly reduced.

You must have enough memory to run your operating system in addition to the memory reserved for use by Couchbase Server. For example, if you want to dedicate 8GB of RAM to Couchbase Server, you must also have enough RAM to host your operating system. If you are running additional applications and servers, you will need additional RAM. For smaller systems, such as those with less than 16GB, you should allocate at least 40% of RAM to your operating system.

Storage Requirements¶

You must have the following storage available:

1GB for application logging

Disk space equal to at least twice your physical RAM, for persistence of information
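
The memory and storage guidance above reduces to simple arithmetic, sketched below. The function names are hypothetical, and the figures are only the minimums stated in this section (1GB for logging, twice the physical RAM for persistence, and at least 40% of RAM left to the operating system on machines with less than 16GB). Treat the results as a starting point rather than a sizing recommendation.

```python
def minimum_disk_gb(physical_ram_gb, logging_gb=1):
    """Minimum storage: logging space plus twice the physical RAM."""
    return logging_gb + 2 * physical_ram_gb


def ram_for_couchbase_gb(physical_ram_gb, os_share=0.40):
    """RAM left for Couchbase Server after reserving os_share for the operating system.

    The 40% figure is the guidance given for systems with less than 16GB.
    """
    return physical_ram_gb * (1 - os_share)


# A hypothetical node with 8GB of physical RAM.
disk_needed = minimum_disk_gb(8)
ram_available = ram_for_couchbase_gb(8)
```

For the 8GB example this gives 17GB of disk and roughly 4.8GB of RAM for Couchbase Server.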

Supported Web Browsers¶

The Couchbase Web Console runs on the following browsers, with JavaScript support enabled:

-

Mozilla Firefox 3.6 or higher

To enable JavaScript, select the Enable JavaScript option within the Content panel of the application preferences.

-

Safari 5 or higher

To enable JavaScript, use the checkbox on the security tab of the application preferences.

-

Google Chrome 11 or higher

To enable JavaScript, use the Allow all sites to run JavaScript (recommended) option within the Content button of the Under the Hood section of the application preferences.

-

Internet Explorer 8 or higher

To enable JavaScript, enable Active Scripting within the Custom Level section of the Security section of the Internet Options item of the Tools menu.

Network Ports¶

Couchbase Server uses a number of different network ports for communication between the different components of the server, and for communicating with clients accessing the data stored in the Couchbase cluster. The ports listed must be available on the host for Couchbase Server to run and operate correctly. Couchbase Server will configure these ports automatically, but you must ensure that your firewall or IP tables configuration allows communication on the specified ports for each usage type. On Linux the installer will notify you that you need to open these ports.

The table below lists the ports used for each of the following types of communication with Couchbase Server:

-

Node to Node

Where noted, these ports are used by Couchbase Server for communication between all nodes within the cluster. You must have these ports open on all nodes to enable them to communicate with each other.

-

Node to Client

Where noted, these ports should be open between each node within the cluster and any client nodes accessing data within the cluster.

-

Cluster Administration

Where noted, these ports should be open and accessible to allow administration, whether using the REST API, command-line clients, or a Web browser.

-

XDCR

Ports are used for XDCR communication between all nodes in both the source and destination clusters.

| Port | Description | Node to Node | Node to Client | Cluster Administration | XDCR |
|---|---|---|---|---|---|
| 8091 | Web Administration Port | Yes | Yes | Yes | Yes |
| 8092 | Couchbase API Port | Yes | Yes | No | Yes |
| 11209 | Internal Cluster Port | Yes | No | No | No |
| 11210 | Internal Cluster Port | Yes | Yes | No | No |
| 11211 | Client interface (proxy) | Yes | Yes | No | No |
| 4369 | Erlang Port Mapper (epmd) | Yes | No | No | No |
| 21100 to 21199 (inclusive) | Node data exchange | Yes | No | No | No |
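
When checking a firewall configuration against the table above, a small script can confirm which ports accept TCP connections. The sketch below uses only the Python standard library. The port lists are transcribed from the table, the function names are hypothetical, and a successful connection only shows that something is listening and reachable on the port, not that it is Couchbase Server.

```python
import socket

# Ports marked "Yes" in the Node to Node column of the table.
NODE_TO_NODE_PORTS = [8091, 8092, 11209, 11210, 11211, 4369] + list(range(21100, 21200))

# Ports marked "Yes" in the Node to Client column of the table.
NODE_TO_CLIENT_PORTS = [8091, 8092, 11210, 11211]


def port_is_reachable(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def unreachable_ports(host, ports, timeout=1.0):
    """Return the subset of ports on host that could not be reached."""
    return [port for port in ports if not port_is_reachable(host, port, timeout)]
```

For example, running `unreachable_ports("node1.example.com", NODE_TO_CLIENT_PORTS)` from a client machine (the host name is a placeholder) returns the client-facing ports that are still blocked.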

Installing Couchbase Server¶

To install Couchbase Server on your machine you must download the appropriate package for your chosen platform from http://www.couchbase.com/downloads. For each platform, follow the corresponding platform-specific instructions.

If you are installing Couchbase Server on to a machine that has previously had Couchbase Server installed and you do not want to perform an upgrade installation, you must remove Couchbase Server and any associated data from your machine before you start the installation. For more information on uninstalling Couchbase Server, see Uninstalling Couchbase Server.

To perform an upgrade installation while retaining your existing dataset, see Upgrading to Couchbase Server 2.0.x.

Red Hat Linux Installation¶

The RedHat installation uses the RPM package. Installation is supported on RedHat and RedHat based operating systems such as CentOS.

To install, use the rpm command-line tool with the RPM package that you downloaded. You must be logged in as root (Superuser) to complete the installation:

root-shell> rpm --install couchbase-server version.rpm

Where version is the version number of the downloaded package.

Once the rpm command completes, Couchbase Server starts automatically, and is configured to automatically start during boot under the 2, 3, 4, and 5 runlevels. Refer to the RedHat RPM documentation for more information about installing packages using RPM.

Once installation finishes, the installation process will display a message similar to that below:

Minimum RAM required : 4 GB

System RAM configured : 8174464 kB

Minimum number of processors required : 4 cores

Number of processors on the system : 4 cores

Starting couchbase-server[ OK ]

You have successfully installed Couchbase Server.

Please browse to http://host_name:8091/ to configure your server.

Please refer to http://couchbase.com for additional resources.

Please note that you have to update your firewall configuration to

allow connections to the following ports: 11211, 11210, 11209, 4369,

8091, 8092 and from 21100 to 21299.

By using this software you agree to the End User License Agreement.

See /opt/couchbase/LICENSE.txt.

Once installed, you can use the RedHat chkconfig command to manage the Couchbase Server service, including checking the current status and creating the links to enable and disable automatic start-up. Refer to the RedHat documentation for instructions.

To continue installation, open a web browser and access the Couchbase Web Console. See Initial Server Setup.

Ubuntu Linux Installation¶

The Ubuntu installation uses the DEB package. To install, use the dpkg command-line tool with the DEB file that you downloaded. The following example uses sudo, which requires root access to allow installation:

shell> sudo dpkg -i couchbase-server version.deb

Where version is the version number of the downloaded package.

Once the dpkg command has been executed, the Couchbase server starts automatically, and is configured to automatically start during boot under the 2, 3, 4, and 5 runlevels. Refer to the Ubuntu documentation for more information about installing packages using the Debian package manager.

Once installation has completed, the installation process will display a message similar to that below:

Selecting previously deselected package couchbase-server.

(Reading database ... 73755 files and directories currently installed.)

Unpacking couchbase-server (from couchbase-server_x86_64_2.x.x-xxx-rel.deb) ...

libssl0.9.8 is installed. Continue installing

Minimum RAM required : 4 GB

System RAM configured : 4058708 kB

Minimum number of processors required : 4 cores

Number of processors on the system : 4 cores

Setting up couchbase-server (2.0.x) ...

* Started couchbase-server

You have successfully installed Couchbase Server.

Please browse to http://slv-0501:8091/ to configure your server.

Please refer to http://couchbase.com for additional resources.

Please note that you have to update your firewall configuration to

allow connections to the following ports: 11211, 11210, 11209, 4369,

8091, 8092 and from 21100 to 21299.

By using this software you agree to the End User License Agreement.

See /opt/couchbase/LICENSE.txt.

Processing triggers for ureadahead ...

ureadahead will be reprofiled on next reboot

Once installed, you can use the service command to manage the Couchbase Server service, including checking the current status. Refer to the Ubuntu documentation for instructions. To continue installation you must open a web browser and access the web administration interface. See Initial Server Setup.

Microsoft Windows Installation¶

To install on Windows, download the Windows installer package. This is supplied as a Windows executable. You can install the package either by using the wizard, or by performing an unattended installation. In either case, make sure that you have no anti-virus software running on the machine before you start the installation process. You also need administrator privileges on the machine where you install it.

Port Exhaustion on Windows¶

The TCP/IP port allocation on Windows by default includes a restricted number of ports available for client communication. For more information on this issue, including information on how to adjust the configuration and increase the available ports, see MSDN: Avoiding TCP/IP Port Exhaustion.

Couchbase Server uses the Microsoft C++ redistributable package, which will automatically download for you during installation. However, if another application on your machine is already using the package, your installation process may fail. To ensure that your installation process completes successfully, shut down all other running applications during installation.

Installation Wizard

-

Double click on the downloaded executable file.

The installer for Windows will detect whether any redistributable packages included with Couchbase need to be installed. If these packages are not already on your system, the installer will automatically install them along with Couchbase Server.

-

Follow the install wizard to complete the installation.

You will be prompted with the Installation Location screen. You can change the location where the Couchbase Server application is located. Note that this does not configure the location of where the persistent data will be stored, only the location of the server itself.

The install will copy the necessary files to the system. During the installation process, the installer will also check to ensure that the default administration port is not already in use by another application. If the default port is unavailable, the installer will prompt for a different port to be used for administration of the Couchbase server.

Important

If the Windows installer hangs on the Computing Space Requirements screen, there is an issue with your setup or installation environment, for example, other running applications.

Workaround:

- Stop any other running browsers and applications before you start installing Couchbase.

- Kill the installation process and uninstall the failed setup.

- Delete or rename the temp location under C:\Users\[logonuser]\AppData\Temp

- Reboot and try again.

After installation you should follow the server setup instructions. See Initial Server Setup.

Unattended Installation

To use the unattended installation process, you first record your installation settings during a wizard installation. These settings are saved to a file. You can use this file to silently install other nodes of the same version.

To record your install options, open a Command Terminal or Powershell and start the installation executable with the /r command-line option:

shell> couchbase_server_version.exe /r /f1your_file_name.iss

You will be prompted with installation options, and the wizard will complete the server install. A file with your options will be recorded at C:\Windows\your_file_name.iss.

To perform an installation using this recorded setup file, copy the your_file_name.iss file into the same directory as the installer executable. Run the installer from the command-line using the /s option:

shell> couchbase_server_version.exe /s -f1your_file_name.iss

You can repeat this process on multiple machines by copying the install package and the your_file_name.iss file to the same directory on each machine.
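When you repeat the silent install on several machines, it can help to build the command line programmatically. The sketch below only assembles the arguments shown above (`/s` and `-f1` followed by the response file name) and checks that the .iss file sits in the same directory as the installer. The function name `silent_install_command` and the example paths are assumptions for illustration.

```python
from pathlib import PureWindowsPath


def silent_install_command(installer: str, response_file: str) -> list:
    """Build the argument list for a silent install.

    Hypothetical helper: it mirrors the documented command line and
    does not run the installer itself.
    """
    installer_path = PureWindowsPath(installer)
    iss_path = PureWindowsPath(response_file)
    # The recorded .iss file must be in the installer's directory.
    if installer_path.parent != iss_path.parent:
        raise ValueError("the .iss file must sit next to the installer")
    # The response file name is appended directly to the -f1 option.
    return [str(installer_path), "/s", "-f1" + iss_path.name]


if __name__ == "__main__":
    # Example paths are placeholders, not real installer names.
    print(silent_install_command(
        r"C:\temp\couchbase_server_version.exe",
        r"C:\temp\your_file_name.iss",
    ))
```

You could pass the resulting list to `subprocess.run` on each target machine. That step is left out here because it depends on how you distribute the files.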

Mac OS X Installation¶

Couchbase Server on Mac OS X is for development purposes only.

Please use the default archive file handler in Mac OS X, Archive Utility, when you unpack the Couchbase Server distribution. It is more difficult to diagnose non-functioning or damaged installations after extraction by third-party archive extraction tools.

The Mac OS X installation uses a Zip file which contains a standalone application that can be copied to the Applications folder or to any other location you choose. The installation location is not the same as the location of the Couchbase data files.

Due to limitations within the Mac OS X operating system, the Mac OS X implementation is incompatible with other operating systems. It is not possible either to mix operating systems within the same cluster, or configure XDCR between a Mac OS X and Windows or Linux cluster.

If you need to move data between a Mac OS X cluster and a cluster hosted on another platform, please use cbbackup and cbrestore. For more information, see Backup and Restore Between Mac OS X and Other Platforms.

To install:

Delete any previous installs of Couchbase Server at the command line or by dragging the icon to the Trash can.

-

Remove remaining files from previous installations:

> rm -rf ~/Library/Application\ Support/Couchbase
> rm -rf ~/Library/Application\ Support/Membase

Download the Mac OS X Zip file.

Double-click the downloaded Zip installation file to extract the server. This will create a single folder, the Couchbase Server.app application.

Drag and drop Couchbase Server.app to your chosen installation folder, such as the system Applications folder.

Once the install completes, you can double-click on Couchbase Server.app to start it. The Couchbase Server icon appears in the menu bar on the right-hand side. If you have not yet configured your server, then the Couchbase Web Console opens and you should complete the Couchbase Server setup process. See Initial Server Setup for more details.

The Couchbase application runs as a background application. If you click on the icon in the menu bar you see a list of operations that can be performed.

The command line tools are included in the Couchbase Server application directory. You can access them in Terminal by using the full path of the Couchbase Server installation. By default, this is /Applications/Couchbase Server.app/Contents/Resources/couchbase-core/bin/.
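Because the default installation path contains a space, commands built from it need quoting when passed to a shell. The following sketch shows one way to produce a shell-safe command string. `tool_command` is a hypothetical helper, and it assumes the default path given above.

```python
import shlex
from pathlib import PurePosixPath

# Default location of the command line tools, as stated above.
DEFAULT_BIN_DIR = PurePosixPath(
    "/Applications/Couchbase Server.app/Contents/Resources/couchbase-core/bin"
)


def tool_command(tool: str, *args: str,
                 bin_dir: PurePosixPath = DEFAULT_BIN_DIR) -> str:
    """Return a shell-safe command line for a tool in the bin directory.

    Hypothetical helper: it quotes each part, so the space in
    'Couchbase Server.app' does not split the path.
    """
    parts = (str(bin_dir / tool),) + args
    return " ".join(shlex.quote(part) for part in parts)


if __name__ == "__main__":
    # couchbase-cli is one of the tools shipped in this directory.
    print(tool_command("couchbase-cli"))
```

Alternatively, you could add the directory to your `PATH` in Terminal and call the tools by name.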

Initial Server Setup¶

We recommend that you clear your browser cache before doing the setup process. You can find notes and tips on how to do this on different browsers and platforms on this page.

On all platforms you can access the web console by connecting to the embedded web server on port 8091. For example, if your server can be identified on your network as servera, you can access the web console by opening http://servera:8091/. You can also use an IP address or, if you are on the same machine, http://localhost:8091. If you set up Couchbase Server on a port other than 8091, go to that port.
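The address rules above can be sketched as a small helper that builds the web console URL from a hostname or IP address and an optional port. The function name `web_console_url` is hypothetical. Only the default port 8091 and the `http://host:port/` form come from the text above.

```python
def web_console_url(host: str = "localhost", port: int = 8091) -> str:
    """Build the web console URL for a node.

    Hypothetical helper: host may be a hostname or an IPv4 address;
    port defaults to 8091, the embedded web server's default.
    """
    if not 1 <= port <= 65535:
        raise ValueError("port must be between 1 and 65535")
    return "http://{}:{}/".format(host, port)


if __name__ == "__main__":
    print(web_console_url("servera"))          # a named server on the network
    print(web_console_url())                   # the local machine
    print(web_console_url("servera", 9000))    # a non-default port
```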

Open Couchbase Web Console.

-

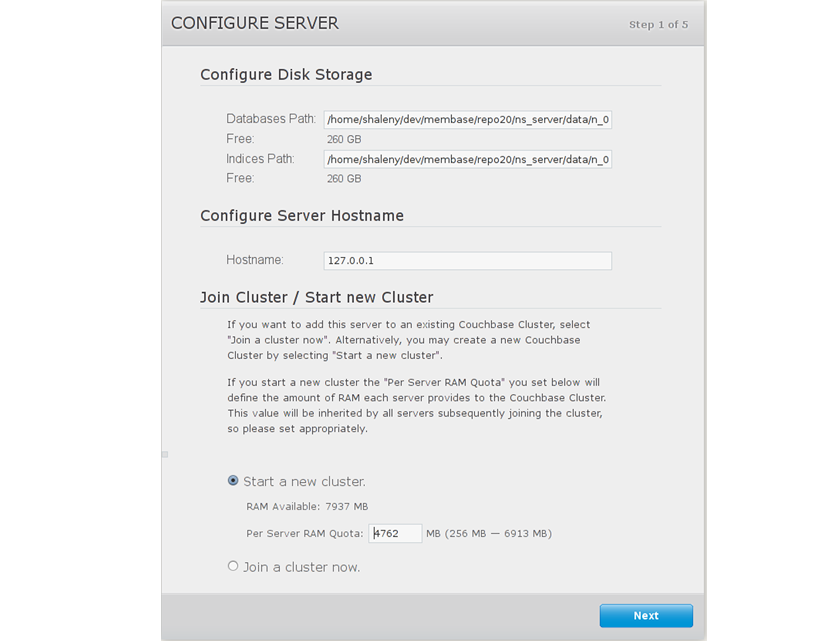

Set the disk storage and cluster configuration.

The Configure Disk Storage option specifies the location of the persistent storage used by Couchbase Server. The setting affects only this node and sets the directory where all the data will be stored on disk. This will also set where the indices created by views will be stored. If you are not indexing data with views you can accept the default setting. For the best performance, you may want to configure two different disks for storing your document and index data.

The Configure Server Memory section sets the amount of physical RAM that will be allocated by Couchbase Server for storage.

If you are creating a new cluster, this is the amount of memory that will be allocated on each node within your Couchbase cluster. The memory for each node in a cluster must be the same amount. You must specify a value that can be supported by all the nodes in your cluster as this setting will apply to the entire cluster.

The default value is 60% of your total free RAM. This figure is designed to allow RAM capacity for use by the operating system caching layer when accessing and using views.

-

Provide a node IP or hostname under Configure Server Hostname. For more details about using hostnames see Using Hostnames with Couchbase Server.

-

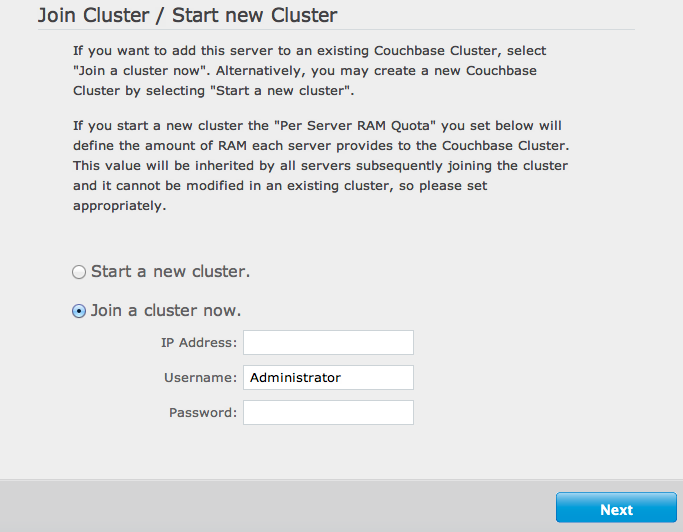

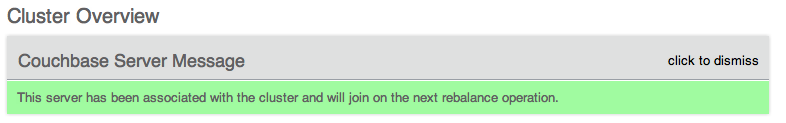

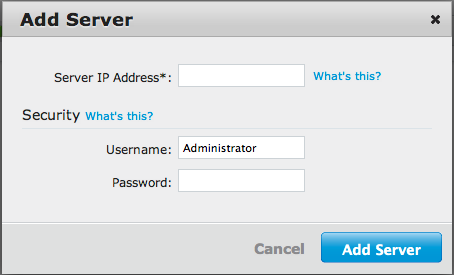

To join an existing cluster, click Join a cluster now.

Provide the IP Address or hostname of an existing node, and administrative credentials for that existing cluster.

-

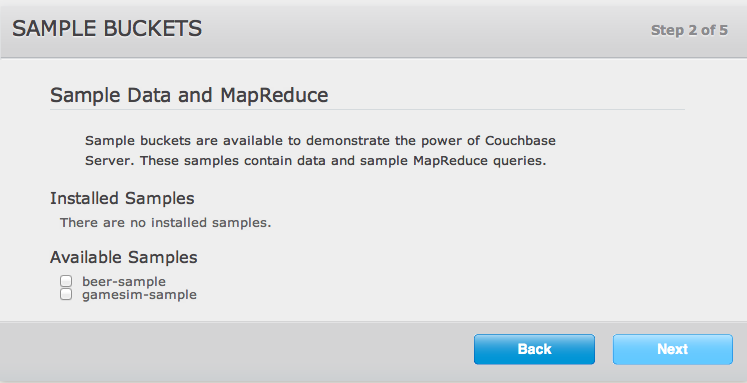

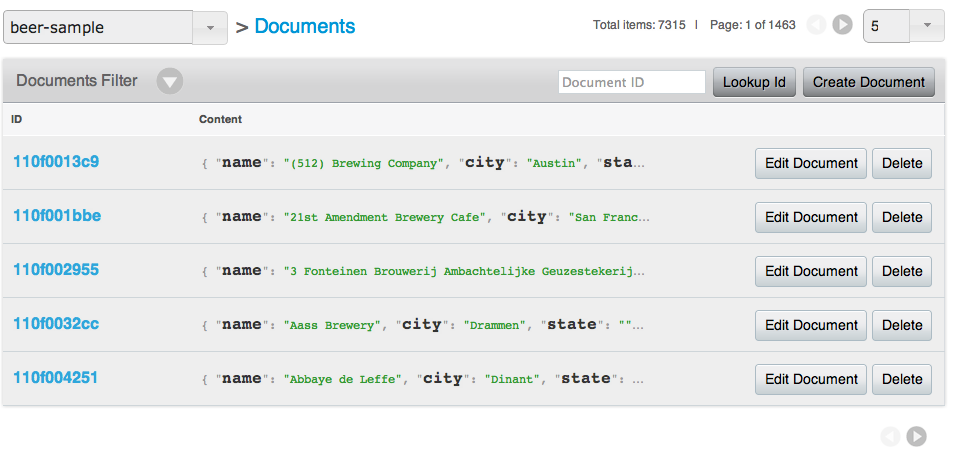

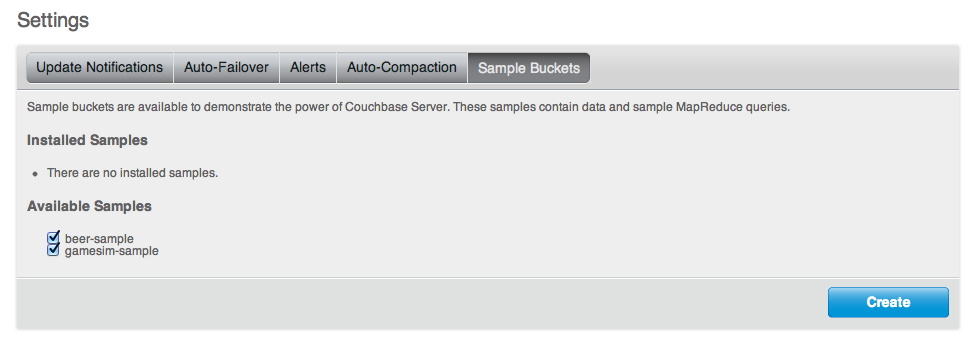

Click on the name of a sample bucket to load Couchbase Server with sample data. This demonstrates Couchbase Server and helps you understand and develop views. If you decide to install sample data, the installer creates one Couchbase bucket for each set of sample data you choose.

For more information on the contents of the sample buckets, see Couchbase Sample Buckets.

-

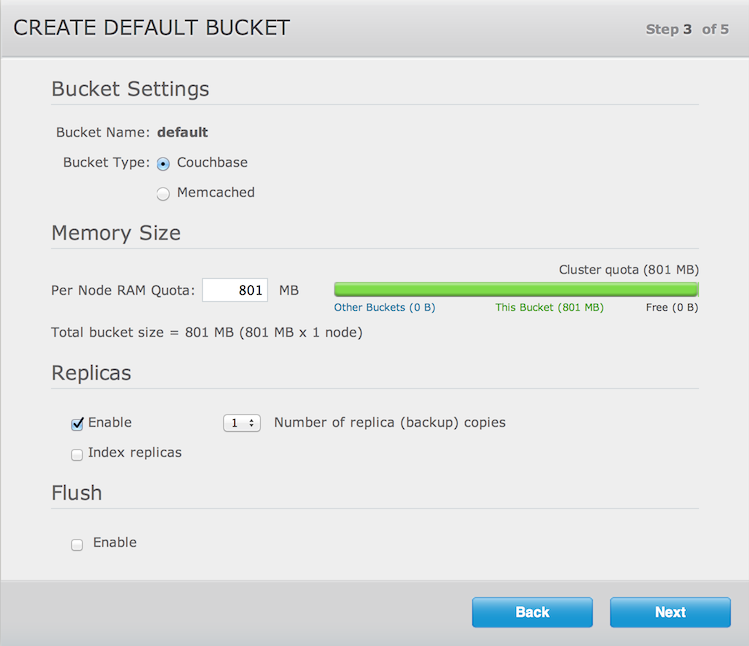

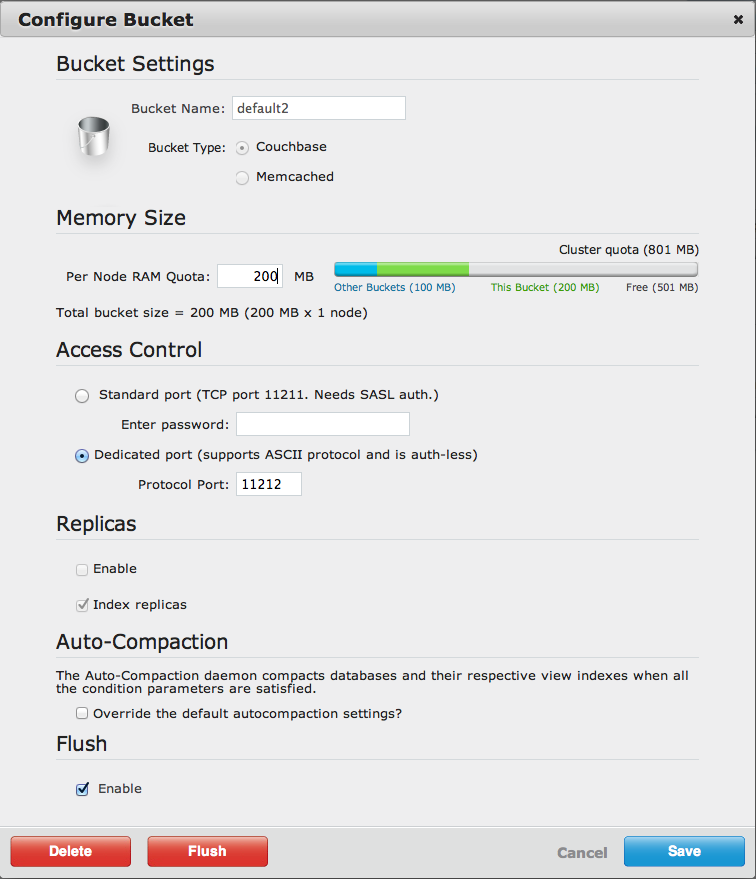

Set up a default bucket for Couchbase Server. You can change the bucket settings later. Note that you cannot change the bucket name after you create it.

Default Bucket Should Only Be Used for Testing¶

The default bucket should not be used for storing live application data; you should create a bucket specifically for your application. The default bucket should only be used for testing.

The options are:

-

Bucket Type

The type of the bucket, either Memcached or Couchbase. See Data Storage for more information. The remainder of the options differ based on your selection.

For Couchbase bucket type:

-

Memory Size

The amount of available RAM on this server which should be allocated to the bucket.

-

Replicas

For Couchbase buckets you can enable data replication so that the data is copied to other nodes in a cluster. You can configure up to three replicas per bucket. If you set this to one, you need to have a minimum of two nodes in your cluster and so forth. If a node in a cluster fails, after you perform failover, the replicated data will be made available on a functioning node. This provides continuous cluster operations in spite of machine failure. For more information, see Failing Over Nodes.

You can disable replication by deselecting the Enable checkbox.

To configure replicas, select a number in the Number of replica (backup) copies drop-down list.

To enable replica indexes, select the Index replicas checkbox. Couchbase Server can also create replicas of indexes. This ensures that indexes do not need to be rebuilt in the event of a node failure. This will increase network load as the index information is replicated along with the data.

-

Flush

Enable or disable support for the Flush command, which deletes all the data in a bucket. To enable the operation for a bucket, click the Enable checkbox. The default is for the flush operation to be disabled.

For Memcached bucket type:

-

Memory Size

The bucket is configured with a per-node amount of memory. Total bucket memory will change as nodes are added/removed.

For more information, see RAM Sizing.

-

-

-

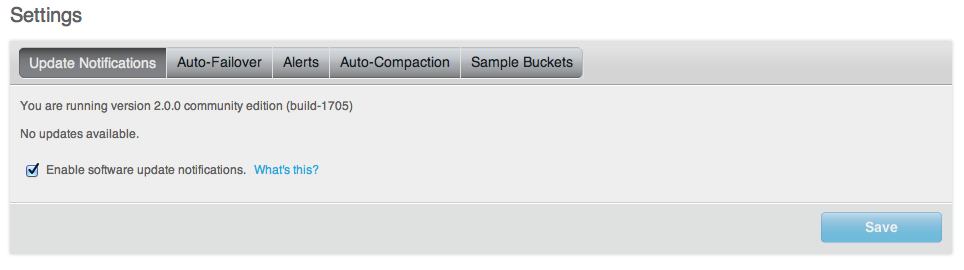

Select Update Notifications, and Couchbase Web Console will communicate with Couchbase nodes and confirm the version numbers of each node. During this process, the client sends the following information to Couchbase Server:

- The current version. When a new version of Couchbase Server exists, you get information on where you can download the new version.

- Information about the size and configuration of your Couchbase cluster. This information helps us prioritize our development efforts.

As long as you have internet access, the information will be sent. The update notification process provides the information anonymously. Couchbase only uses this information to provide you with updates and information that will help us improve Couchbase Server and related products.

When you provide an email address we will add it to the Couchbase community mailing list, which will provide you with news and update information about Couchbase and related products. You can unsubscribe from the mailing list at any time using the unsubscribe link provided in each email communication.

Enter a username and password. The password must be at least six characters in length. You use these credentials each time you add a new server into the cluster. They are also the credentials for the Couchbase REST API. See Using the REST API.

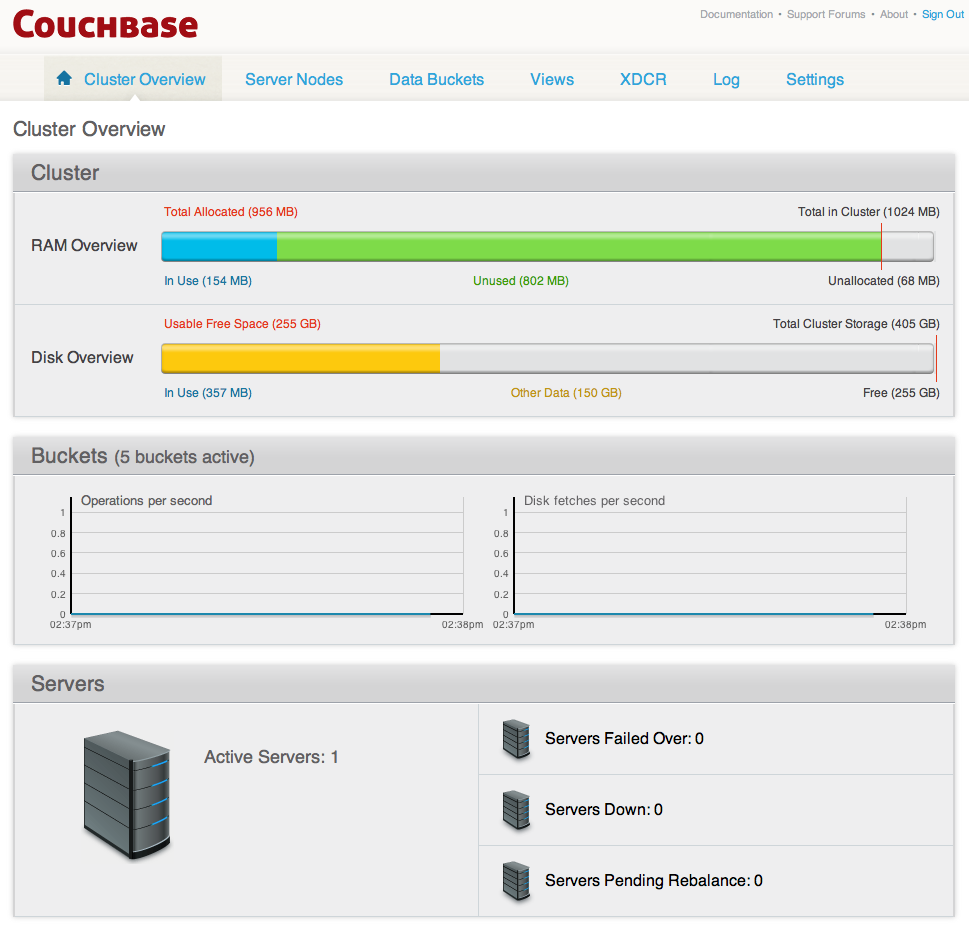

Once you finish this setup, you see Couchbase Web Console with the Cluster Overview page:

Your server is now running and ready to use. After you install your server and finish initial setup, you can also optionally configure other settings, such as the port and RAM, using any of the following methods:

-

Using command-line tools

The command line tools provided with your Couchbase Server installation include couchbase-cli. This tool provides access to the core functionality of Couchbase Server by providing a wrapper to the REST API. See cluster initialization.

-

Using the REST API

Couchbase Server can be configured and controlled using a REST API. In fact, the REST API is the basis for both the command-line tools and Web interface to Couchbase Server.

For more information on using the REST API to provision and setup your node, see Provisioning a Node.
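As a rough illustration of REST-based provisioning, the sketch below builds (but does not send) two POST requests: one that sets the per-node RAM quota and one that sets the administrator credentials and port. The endpoint paths `/pools/default` and `/settings/web` and their field names are assumptions based on the Couchbase REST API as commonly documented. Verify them against Provisioning a Node before use. The helper name `provisioning_requests` and the example values are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request


def provisioning_requests(base_url, username, password,
                          ram_quota_mb, port=8091):
    """Build the POST requests for basic node provisioning.

    Hypothetical helper: endpoint paths and field names are assumptions,
    and authentication headers are deliberately left out.
    """
    # The setup wizard requires at least six characters.
    if len(password) < 6:
        raise ValueError("password must be at least six characters")

    def post(path, fields):
        body = urlencode(fields).encode("ascii")
        return Request(base_url.rstrip("/") + path, data=body, method="POST")

    return [
        # Assumed endpoint: per-node RAM quota in megabytes.
        post("/pools/default", {"memoryQuota": ram_quota_mb}),
        # Assumed endpoint: administrator credentials and admin port.
        post("/settings/web",
             {"username": username, "password": password, "port": port}),
    ]


if __name__ == "__main__":
    for req in provisioning_requests("http://localhost:8091",
                                     "Administrator", "letmein", 400):
        print(req.get_method(), req.full_url, req.data.decode("ascii"))
```

To actually apply the settings you would send each request with `urllib.request.urlopen`, adding credentials where the server requires them.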

Using Hostnames with Couchbase Server¶

When you first install Couchbase Server you can access it using a default IP address. There may be cases where you want to provide a hostname for each instance of a server. Each hostname you provide should be valid and must ultimately resolve to a valid IP address. This section describes how you provide hostnames on Windows and Linux for the different versions of Couchbase Server. If you restart a node, it will use the hostname once again. If you fail over or remove a node from a cluster, you should configure the hostname for that node once again.

If you run Couchbase Server in the cloud (such as EC2, Azure, etc.), see Handling Changes in IP Addresses for more information about handling IP addresses and hostnames.

Hostnames for Couchbase Server 2.0.1 and Earlier¶

For 2.0.1, please follow the same steps as for 2.0 and earlier.

For Linux 2.0.1 and Earlier:

Install Couchbase Server.

-

Execute:

sudo /etc/init.d/couchbase-server stop

-

Edit the start() function in the script located at /opt/couchbase/bin/couchbase-server.

Under the line that reads:

-run ns_bootstrap -- \

Add a new line that reads:

-name ns_1@hostname \

Where hostname is either a DNS name or an IP address that you want this server to use to identify the node (the ‘ns_1@’ prefix is mandatory). For example:

…
-run ns_bootstrap -- \
-name ns_1@couchbase1.company.com \
-ns_server config_path ""/opt/couchbase/etc/couchbase/static_config"" \
…

-

Edit the IP address configuration file.

This is located at /opt/couchbase/var/lib/couchbase/ip. This file contains the identified IP address of the node once it is part of a cluster. Open the file, and add a single line containing the hostname, as configured in the previous step.

-

Delete the files under:

/opt/couchbase/var/lib/couchbase/data/*
/opt/couchbase/var/lib/couchbase/mnesia/*
/opt/couchbase/var/lib/couchbase/config/config.dat

-

Execute:

sudo /etc/init.d/couchbase-server start

You can see the correctly identified node as the hostname under the Manage Servers page. You will again see the setup wizard since the configuration was cleared out; but after completing the wizard the node will be properly identified.
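The script edit in the Linux steps above can be sketched as a text transformation: find the line that starts with `-run ns_bootstrap` and insert a `-name ns_1@hostname \` line directly after it. This is an illustrative sketch, not a supported tool. `add_node_name` is a hypothetical helper, and it works on the script text rather than the file, so you can review the result before writing it back.

```python
def add_node_name(script_text: str, hostname: str) -> str:
    """Insert '-name ns_1@<hostname> \\' after the '-run ns_bootstrap' line.

    Hypothetical helper: only the first matching line is changed, and
    the new line reuses that line's indentation.
    """
    out = []
    inserted = False
    for line in script_text.splitlines(keepends=True):
        if not line.endswith("\n"):
            line += "\n"  # keep the inserted line on its own row
        out.append(line)
        if not inserted and line.strip().startswith("-run ns_bootstrap"):
            indent = line[: len(line) - len(line.lstrip())]
            # The 'ns_1@' prefix is mandatory, as noted in the steps.
            out.append("{}-name ns_1@{} \\\n".format(indent, hostname))
            inserted = True
    if not inserted:
        raise ValueError("no '-run ns_bootstrap' line found")
    return "".join(out)


if __name__ == "__main__":
    # A shortened stand-in for the real start() body.
    sample = "    -run ns_bootstrap -- \\\n    -ns_server config_path x \\\n"
    print(add_node_name(sample, "couchbase1.company.com"))
```

Stop the server before you edit the script, and back up the original file first.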

For Windows 2.0.1 and Earlier:

Install Couchbase Server.

-

Stop the service by running:

shell> "C:\Program Files\Couchbase\Server\bin\service_stop.bat"

-

Unregister the service by running:

shell> "C:\Program Files\Couchbase\Server\bin\service_unregister.bat"

-

For 2.0, edit the script located at C:\Program Files\Couchbase\Server\bin\service_register.bat.

On the 7th line it says:

set NS_NAME=ns_1@%IP_ADDR%

Replace %IP_ADDR% with the hostname/IP address that you want to use.

-

Edit the IP address configuration file.

Edit C:\Program Files\Couchbase\Server\var\lib\couchbase\ip. This file contains the identified IP address of the node once it is part of a cluster. Open the file, and add a single line containing the hostname, as configured in the previous step.

Register the service by running the modified script:

shell> "C:\Program Files\Couchbase\Server\bin\service_register.bat"

Delete the files located under:

C:\Program Files\Couchbase\Server\var\lib\couchbase\mnesia

-

Start the service by running:

shell> "C:\Program Files\Couchbase\Server\bin\service_start.bat"

The node now identifies itself by the hostname in the GUI under the Manage Servers page. Note that you will be taken back to the setup wizard since the configuration was cleared out, but after completing the wizard the node will be named properly.
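The edit to service_register.bat in the Windows steps above amounts to a single substitution. The sketch below applies it to the script text and fails loudly if the expected line is missing, for example because the script differs in your version. `set_windows_node_name` is a hypothetical helper, not part of Couchbase Server.

```python
def set_windows_node_name(bat_text: str, hostname: str) -> str:
    """Replace %IP_ADDR% in the NS_NAME line with a fixed hostname.

    Hypothetical helper: it changes only the first
    'set NS_NAME=ns_1@%IP_ADDR%' occurrence and leaves the rest as-is.
    """
    old = "set NS_NAME=ns_1@%IP_ADDR%"
    if old not in bat_text:
        raise ValueError("expected NS_NAME line not found")
    return bat_text.replace(old, "set NS_NAME=ns_1@" + hostname, 1)


if __name__ == "__main__":
    # A shortened stand-in for the real service_register.bat contents.
    sample = "@echo off\r\nset NS_NAME=ns_1@%IP_ADDR%\r\n"
    print(set_windows_node_name(sample, "couchbase1.company.com"))
```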

Upgrading to Couchbase Server 2.0.x¶

The following are the officially supported upgrade paths for Couchbase Server, for both online and offline upgrades:

- Couchbase 1.8.1 to Couchbase 2.0.x and above

- Couchbase 2.0 to Couchbase 2.0.x and above

If you want to upgrade from 1.8.0 to 2.0.x, you must have enough disk space available for both your original Couchbase Server 1.8 data files and the new format for Couchbase Server 2.0 files. You will also need additional disk space for new functions such as indexing and compaction. You will need approximately three times the disk space.

Direct upgrades from Couchbase Server 1.8.0 or earlier releases to 2.0 are not supported. You must first upgrade to Couchbase Server 1.8.1 to provide data compatibility with Couchbase Server 2.0+.
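The upgrade rules above can be turned into a quick pre-flight check. This sketch assumes two things that the text only implies: that any version at or above 1.8.1 can upgrade directly, and that "three times the disk space" means three times the size of your current data files. The helper names are hypothetical.

```python
def parse_version(version: str) -> tuple:
    """Turn a dotted version such as '1.8.1' into a tuple of integers."""
    return tuple(int(part) for part in version.split("."))


def can_upgrade_directly(current: str) -> bool:
    """True if `current` may upgrade straight to 2.0.x.

    Assumption: everything from 1.8.1 upward is a supported starting
    point; 1.8.0 and earlier must first move to 1.8.1.
    """
    return parse_version(current) >= (1, 8, 1)


def required_disk_bytes(data_bytes: int, factor: int = 3) -> int:
    """Estimate the disk space needed, assuming `factor` times current data."""
    return data_bytes * factor


if __name__ == "__main__":
    for version in ("1.8.0", "1.8.1", "2.0"):
        print(version, "direct upgrade:", can_upgrade_directly(version))
    print("Needed for 10 GB of data:", required_disk_bytes(10 * 1024**3))
```

You could compare the estimate with the free space reported by `shutil.disk_usage` for your data directory before starting the upgrade.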

You can perform a cluster upgrade in two ways:

-

Online Upgrades