Sample Application


Discover how to program interactions with Couchbase Server 7.X via the Data, Query, and Search services, using the Travel Sample Application with the built-in Travel Sample data Bucket.

Quick Start

Fetch the Couchbase Java SDK travel-sample Application REST Backend from GitHub:

git clone https://github.com/couchbaselabs/try-cb-java.git

cd try-cb-java

With Docker installed, you should now be able to run a bare-bones copy of Couchbase Server, load the travel-sample, add indexes, install the sample-application and its frontend, all by running a single command:

docker-compose --profile local up

Running the code against your own development Couchbase server.

For Couchbase Server 7.6, make sure that you have at least one node each of data, query, index, and search. For a development box, mixing more than one of these on a single node (given enough memory resources) is perfectly acceptable.

If you have yet to install Couchbase Server in your development environment, start here.

Then load up the Travel Sample Bucket, using either the Web interface or the command line. You will also need to create a Search Index; Query indexes are taken care of by the Sample Bucket.

See the README at https://github.com/couchbaselabs/try-cb-java for full details of how to run and tweak the Java SDK travel-sample app.

Using the Sample App

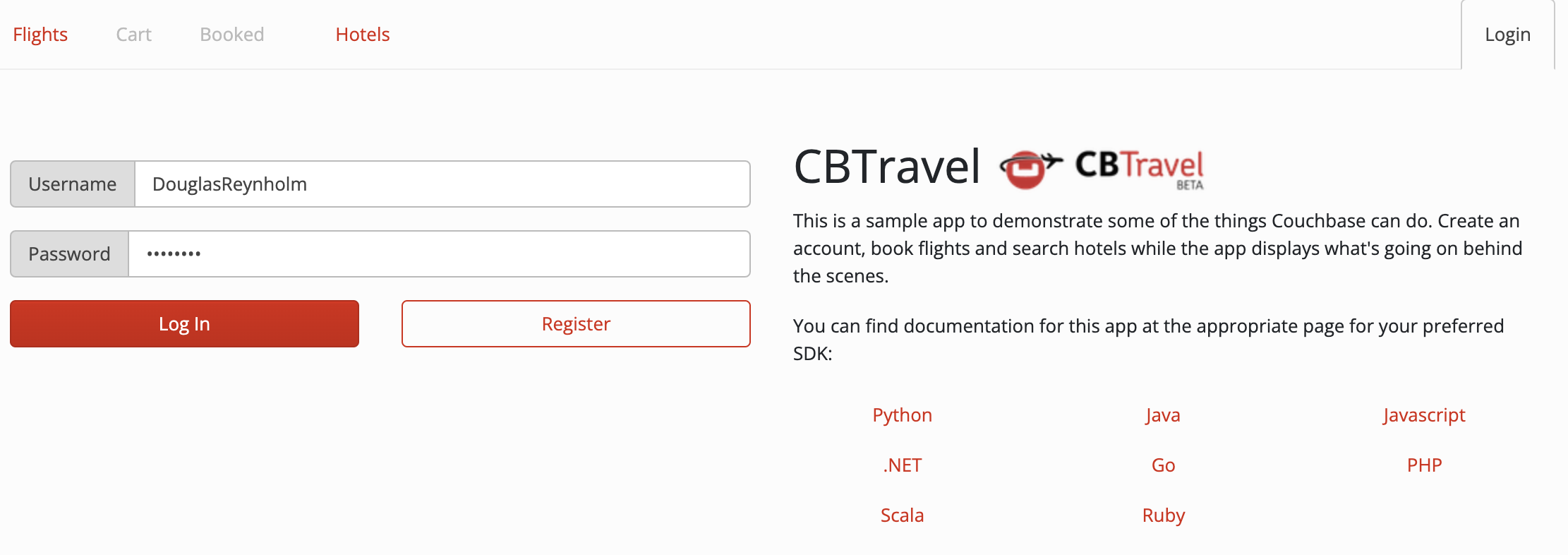

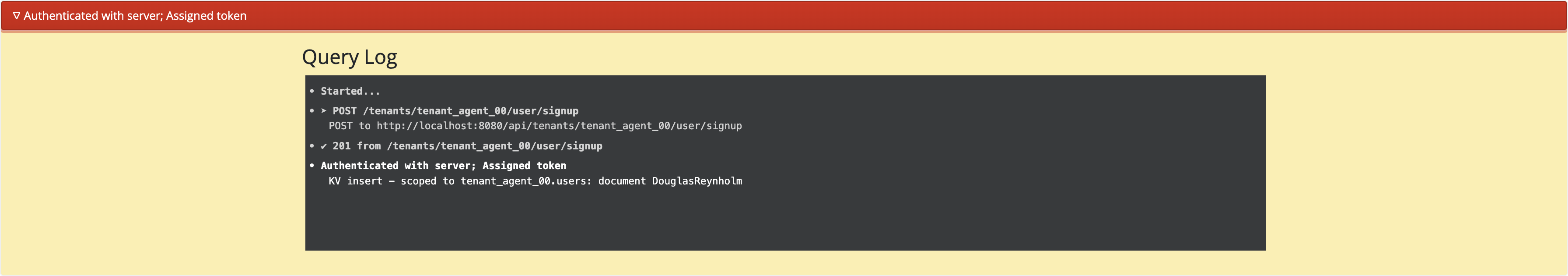

Give yourself a username and password and click Register.

You can now try out searching for flights, booking flights, and searching for hotels. You can see which Couchbase SDK operations are being executed by clicking the red bar at the bottom of the screen.

Sample App Backend

The backend code shows the Couchbase Java SDK in action with Query and Search, but also how to plug together all of the elements and build an application with Couchbase Server and the Java SDK.
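The handlers excerpted further down return their payload through `Result.of(data, queryType)`, pairing the response data with a short description of the SDK operation that produced it. A minimal sketch of such a wrapper might look like the following. It assumes nothing about the real `Result` class in the repository beyond that two-argument call shape; the class name `ResultSketch`, its fields, its accessors, and the sample values are all hypothetical.

```java
import java.util.Map;

// Hypothetical simplified stand-in for the sample's Result type: it carries
// the response payload plus a human-readable note about the SDK operation.
final class ResultSketch<T> {
    private final T data;
    private final String context;

    private ResultSketch(T data, String context) {
        this.data = data;
        this.context = context;
    }

    // Static factory mirroring the Result.of(data, queryType) call shape.
    static <T> ResultSketch<T> of(T data, String context) {
        return new ResultSketch<>(data, context);
    }

    T getData() { return data; }
    String getContext() { return context; }

    public static void main(String[] args) {
        // Example values only; the scope and user names are placeholders.
        String queryType = String.format(
                "KV insert - scoped to %s.users: document %s", "tenant_agent_00", "alice");
        ResultSketch<Map<String, Object>> result =
                ResultSketch.of(Map.of("token", "example-token"), queryType);
        System.out.println(result.getContext());
    }
}
```

Keeping the description next to the data means each handler can report what it did without the caller having to know which service was used.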

Look at TenantUser.java to see some of the pieces necessary in most applications, such as the TenantUser @Service:

    @Service
    public class TenantUser {

        private final TokenService jwtService;

        @Autowired
        public TenantUser(TokenService jwtService) {
            this.jwtService = jwtService;
        }

        static final String USERS_COLLECTION_NAME = "users";
        static final String BOOKINGS_COLLECTION_NAME = "bookings";

Creating a user shows the typical security concerns, with salted password hashes, as well as the mundane but essential business of using the KV interface to insert the username into the database:

    public Result<Map<String, Object>> createLogin(final Bucket bucket, final String tenant, final String username,
            final String password, DurabilityLevel expiry) {
        // Store only a salted BCrypt hash, never the plain-text password.
        String passHash = BCrypt.hashpw(password, BCrypt.gensalt());
        JsonObject doc = JsonObject.create()
                .put("type", "user")
                .put("name", username)
                .put("password", passHash);

        InsertOptions options = insertOptions();
        // Despite its name, expiry carries the requested durability level;
        // it is applied only when it is above the first enum value (NONE).
        if (expiry.ordinal() > 0) {
            options.durability(expiry);
        }

        Scope scope = bucket.scope(tenant);
        Collection collection = scope.collection(USERS_COLLECTION_NAME);
        String queryType = String.format("KV insert - scoped to %s.users: document %s", scope.name(), username);
        try {
            // The username doubles as the document key.
            collection.insert(username, doc, options);
            Map<String, Object> data = JsonObject.create().put("token", jwtService.buildToken(username)).toMap();
            return Result.of(data, queryType);
        } catch (Exception e) {
            e.printStackTrace();
            throw new AuthenticationServiceException("There was an error creating account");
        }
    }

Here, the flights array, containing the flight IDs, is converted to actual objects:

        List<Map<String, Object>> results = new ArrayList<Map<String, Object>>();
        for (int i = 0; i < flights.size(); i++) {
            String flightId = flights.getString(i);
            GetResult res;
            try {
                res = bookingsCollection.get(flightId);
            } catch (DocumentNotFoundException ex) {
                throw new RuntimeException("Unable to retrieve flight id " + flightId);
            }
            Map<String, Object> flight = res.contentAsObject().toMap();
            results.add(flight);
        }

        String queryType = String.format("KV get - scoped to %s.user: for %d bookings in document %s", scope.name(),
                results.size(), username);
        return Result.of(results, queryType);
    }

Data Model

See the Travel App Data Model reference page for more information about the sample data set used.
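As a rough illustration of how the application's own documents relate to each other, a user document stores only flight IDs, and each ID is the key of a separate document in the bookings collection. The sketch below mimics that lookup with a plain `Map` standing in for the bookings collection, so it runs without a cluster and makes no Couchbase SDK calls. The class name, `resolveFlights`, and the sample IDs and field values are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.NoSuchElementException;

// Hypothetical stand-in: a Map plays the role of the bookings collection,
// keyed by flight ID, so the sketch runs without a Couchbase cluster.
final class BookingLookupSketch {

    // Resolve each flight ID into its booking document, failing fast on a
    // missing ID (similar in spirit to the RuntimeException in the sample).
    static List<Map<String, Object>> resolveFlights(
            List<String> flightIds, Map<String, Map<String, Object>> bookings) {
        List<Map<String, Object>> results = new ArrayList<>();
        for (String flightId : flightIds) {
            Map<String, Object> booking = bookings.get(flightId);
            if (booking == null) {
                throw new NoSuchElementException("Unable to retrieve flight id " + flightId);
            }
            results.add(booking);
        }
        return results;
    }

    public static void main(String[] args) {
        // Placeholder booking documents; real ones hold more fields.
        Map<String, Map<String, Object>> bookings = new LinkedHashMap<>();
        bookings.put("booking-1", Map.of("name", "Example Air", "flight", "EX123"));
        bookings.put("booking-2", Map.of("name", "Example Air", "flight", "EX456"));

        List<Map<String, Object>> flights =
                resolveFlights(List.of("booking-1", "booking-2"), bookings);
        System.out.println(flights.size() + " bookings resolved");
    }
}
```

Storing IDs rather than embedded copies keeps the user document small, at the cost of one extra key-value read per booking.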